QA OUTSOURCING FOR ENTERPRISE CTOs

- April 30, 2026

- Nabeesha Javed

The Decision Playbook (2026)

Let’s skip the setup. You’re not here to learn that outsourcing QA exists, or to read another article with a stock photo of people pointing at a whiteboard. You’re here because something in your release process is breaking down and you’re trying to figure out if handing QA to an external team will fix it or just move the problem somewhere harder to see.

That’s an honest question. Here’s an honest answer.

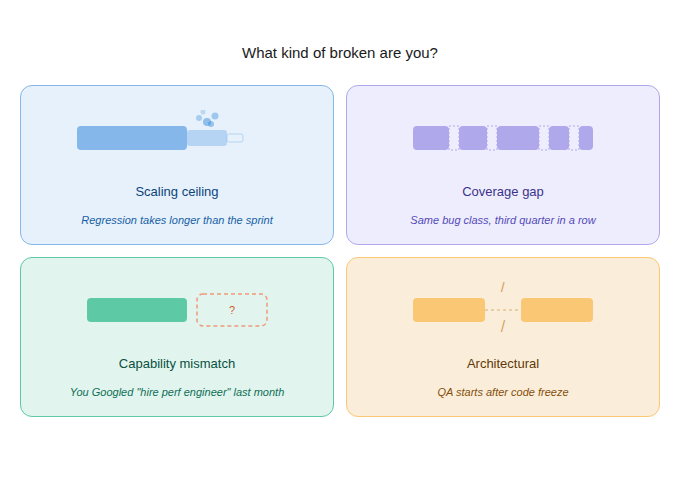

First: What Kind of Broken Are You?

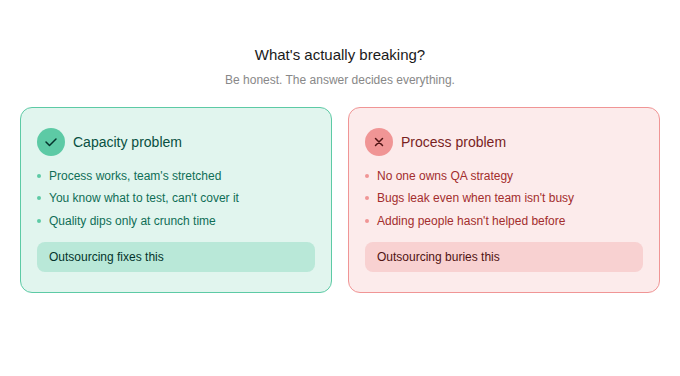

Not all QA dysfunction looks the same, and outsourcing is not the same fix for all of it. Before you evaluate a single vendor, you need to be clear on which of these is actually your problem.

The scaling ceiling problem

Your QA team isn’t incompetent. Your process isn’t being ignored. But your codebase grew 40% in 18 months, and your regression cycle grew with it, while your release cadence didn’t slow down to match. You’re shipping on a schedule that your testing infrastructure wasn’t built for.

The coverage gap problem

You have QA. You even have automation. But the same defect classes keep appearing in production: payment edge cases, session handling failures, API schema mismatches. Your testing is finding failures in the obvious paths and missing the ones that actually hurt users.

The capability mismatch problem

Your roadmap now requires performance engineering under realistic load, security testing, or AI-assisted test automation. None of those were in scope when you built your QA function, and your team wasn’t hired for them. You can’t build that capability fast enough internally.

The architectural problem

89% of enterprise engineering teams have adopted CI/CD pipelines (ThinkSys QA Trends, 2026). If your QA team is still operating as a post-sprint quality gate, you don’t have a testing problem. You have a structural mismatch between how you ship and how you verify. That’s the one outsourcing actually solves fastest.

| Outsourcing fixes structural gaps. It doesn’t fix process problems you haven’t defined yet. If you can’t articulate which of the above describes your situation, stop here and do that audit first. |

The Numbers You Already Know But Haven’t Priced Out

You’ve seen the IBM stat about bugs costing 100x more in production than in design. What’s more useful is running it against your actual context.

| Metric | Number |
| --- | --- |
| Critical app downtime cost per hour (Gartner) | $300,000+ |
| Annual cost of poor software quality, US (CISQ 2024) | $3.1 trillion |
| Engineer sprint capacity lost to rework and bug fixes | 30 to 50% |
| Cost reduction from outsourced QA over 3 years (Capgemini, 2025) | 40 to 60% |
| QA market projected size by 2035 | $101 billion |

For a fintech platform processing trades, one hour of downtime during market hours can cost millions. For an e-commerce operation doing $500K per day, a checkout bug that silently fails just 2% of orders and runs undetected for a week bleeds $70K directly ($500K × 7 days × 2%). The budget conversation about outsourcing QA should never start with ‘what does it cost’ and should always start with ‘what is our current defect leakage costing us.’
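The e-commerce figure above is plain arithmetic, and it is worth rerunning with your own numbers. Here is a minimal sketch in Python. The function name and the 2% affected-order share are illustrative assumptions (2% is the share that reproduces the $70K figure from $500K per day over seven days), not values taken from any of the cited reports.

```python
def leakage_cost(daily_revenue: float, days_undetected: int, affected_share: float) -> float:
    """Revenue lost while a defect silently fails a share of transactions."""
    if not 0.0 <= affected_share <= 1.0:
        raise ValueError("affected_share must be between 0 and 1")
    return daily_revenue * days_undetected * affected_share


# Assumption: the bug fails 2% of checkouts on a $500K/day store for 7 days.
weekly_loss = leakage_cost(500_000, 7, 0.02)
print(f"${weekly_loss:,.0f}")
```

Swap in your own daily revenue, your typical time to detect, and a realistic failure share for the defect classes that keep reaching production. That number, not the vendor quote, is the baseline for the budget conversation.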

Should You Outsource? The Three Conditions That Make It the Right Call

Outsourcing is not universally the right answer. It’s the right answer under specific conditions. Here are the three that actually hold up in practice.

1. You need a capability you can’t build fast enough internally

The 2025 World Quality Report (Capgemini) found that 50% of organizations lack AI and machine learning expertise within their QA teams. That number hasn’t changed year over year, which tells you hiring for it isn’t moving fast enough for most organizations. QA roles requiring AI testing or compliance expertise now carry a 20 to 40% salary premium. If your roadmap requires performance engineering, security testing, or AI-assisted test automation in the next two quarters, building that in-house realistically takes 12 to 18 months. A mature outsourcing partner brings it operational in weeks.

2. Your release velocity is already past your test coverage

This is the CI/CD timing problem. If your pipeline moves and your QA doesn’t, every sprint compounds the gap. Restructuring your internal QA team to embed into sprints takes organizational change that usually takes longer than the release calendar allows. Bringing in an external team that already operates this way is faster.

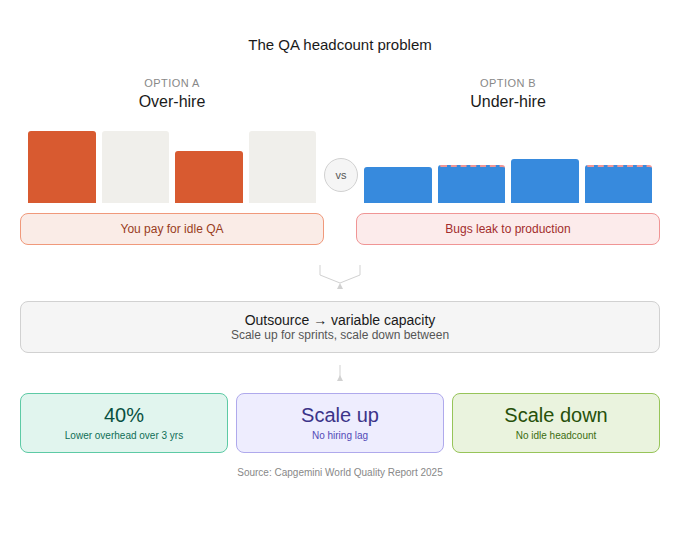

3. You need variable capacity, not fixed headcount

Enterprise teams scaling rapidly face a binary internally: over-hire and carry a bloated QA headcount between sprints, or under-hire and compromise coverage during crunch.

Outsourcing converts that fixed cost into a variable one. Organizations that move to outsourced QA models report reducing operational overhead by 40% over three years (Capgemini, 2025). That’s not a rounding error.

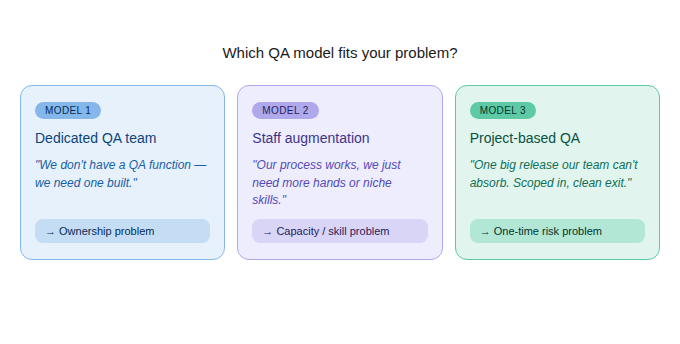

Choosing the Engagement Model (And Why Getting This Wrong Kills the Partnership)

Most outsourcing decisions fail not because the vendor was wrong, but because the model was wrong for the problem. There are three. Pick based on what you’re actually solving.

The honest diagnostic question before choosing: are you solving a capacity problem, a capability problem, or an ownership problem? Picking staff augmentation when you need ownership is the most common mistake. You end up with a partner who executes your broken process more efficiently, and your defect leakage rate doesn’t move.

What Actually Matters in Vendor Selection at Enterprise Scale

Most vendor evaluations measure the wrong things. Cost, turnaround speed, and pitch quality are easy to assess and almost irrelevant to long-term success. Here’s what a CTO-level evaluation should actually cover.

Domain expertise, not just QA expertise

A vendor with deep QA capability but no experience in your vertical spends the first three months learning your risk profile. For fintech, that means understanding PCI-DSS compliance testing. For healthcare SaaS, it means HIPAA boundary testing. For enterprise SaaS platforms, it means load testing under usage patterns that look nothing like synthetic benchmarks.

The most important parameter is process maturity: is the vendor TMMi Level 5 certified, or do they run a testing center of excellence? TMMi Level 5 is the highest maturity level a quality assurance company can achieve under that model. Kualitatem, for example, is TMMi Level 5 certified, which makes us a suitable candidate for enterprise work.

Ask specifically: what production incidents have they prevented for clients in your sector? Not what tests they ran. What failures did they catch before those failures cost someone money?

CI/CD integration capability

With 89% CI/CD adoption across enterprise engineering teams, a QA partner who operates as a separate waterfall-era quality gate is not a partner. They’re a problem. See whether their team can embed directly into your pipeline, instrument tests at the sprint level, and surface results in your existing dashboards without manual handoffs. Ask for a technical walkthrough of how they’ve done this for a client with a similar stack.

Also check who their leadership team is, and ask to meet the people who would be responsible for your specific project. It’s worth confirming whether the team is ISTQB certified as well.

Having partnered with Fortune 500 industry giants, Kualitatem builds its team with ISTQB-certified professionals, ensuring that quality is embedded not only in processes, but in mindset and attitude.

AI testing maturity, not AI testing claims

Nearly 90% of organizations are piloting or deploying AI-augmented testing workflows. Only 15% have achieved enterprise-scale deployment (Capgemini, 2025). The gap between a vendor who demos AI tooling and one who has operationalized it at scale is significant, and that gap seldom shows up in a pitch deck.

Don’t ask if they use AI in testing. Ask for production examples of AI-assisted test case generation, log analysis, and self-healing test scripts in actual client environments. If they can’t name specific client scenarios and outcomes, they’re in the roughly 75% that are piloting but haven’t reached enterprise scale.

For example, at the enterprise level, the Kualitatem team typically combines multi-agent testing, advanced AI testing, automation testing, and quality assurance services engineered to align with current enterprise requirements and integrate directly into complex environments.

Security and compliance posture

Your QA partner will have access to your codebase, test environments, and in some cases, production-adjacent data. Evaluate their security posture the way you would an infrastructure vendor. ISO 27001 and SOC 2 Type II are the baseline. Zero Trust network access for external testers and MFA for all codebase access should be non-negotiable, and you should ask for evidence, not just certification logos.

Escalation structure, mapped before you sign

Most outsourcing failures come from broken communication during incidents, not from bad testing. Before signing, get specific answers to:

- Who owns incident escalation on their side?

- What is the SLA for critical defect notification?

- How do disputed priorities get resolved?

A vendor who gives vague answers here during the sales process will give vague answers when a critical bug surfaces at 2 AM.

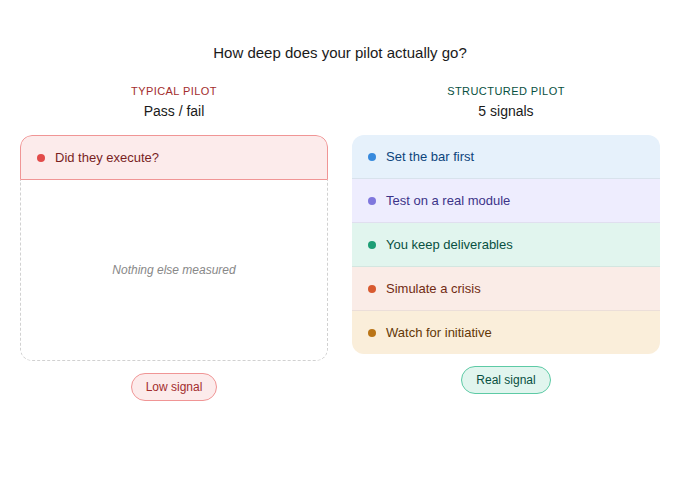

How to Run a Pilot That Actually Tells You Something

A one- to three-month structured pilot before any long-term contract is the right approach. But only if you structure it to give you a real signal, not just a pass/fail on test execution.

• Define success criteria before the pilot starts. What defect leakage rate is acceptable? What test coverage threshold represents adequate protection for your release cadence?

• Run the pilot on a real module with real stakes. Not a throwaway test project. You need to see how the vendor behaves under actual release pressure.

• Require deliverables your team retains. Test suites, documentation, and process artifacts that stay with you regardless of whether the partnership continues.

• Simulate an escalation. Real or staged, you want to see how they communicate when something isn’t going smoothly before you need them to do it under pressure.

• Track their initiative, not just their execution. A strong partner flags risk you didn’t ask them to look for. That’s the behavior that matters in production.
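One way to keep the pilot honest is to write the success criteria down as data before it starts, then check the results against them at the end. The sketch below assumes three criteria drawn from the list above (leakage rate, coverage, and escalation response). The class names, field names, and threshold values are all hypothetical placeholders; set your own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotCriteria:
    max_leakage_rate: float       # share of defects allowed to reach production
    min_coverage: float           # coverage threshold agreed for your release cadence
    max_escalation_minutes: int   # time allowed to notify you of a critical defect


@dataclass(frozen=True)
class PilotResult:
    leakage_rate: float
    coverage: float
    escalation_minutes: int


def unmet_criteria(criteria: PilotCriteria, result: PilotResult) -> list[str]:
    """Return the names of the criteria the pilot did not meet."""
    unmet = []
    if result.leakage_rate > criteria.max_leakage_rate:
        unmet.append("defect leakage rate")
    if result.coverage < criteria.min_coverage:
        unmet.append("coverage")
    if result.escalation_minutes > criteria.max_escalation_minutes:
        unmet.append("escalation response")
    return unmet


# Hypothetical thresholds, agreed before the pilot starts.
criteria = PilotCriteria(max_leakage_rate=0.10, min_coverage=0.80, max_escalation_minutes=60)
# Hypothetical results, measured at the end of the pilot.
result = PilotResult(leakage_rate=0.07, coverage=0.85, escalation_minutes=140)
print(unmet_criteria(criteria, result))
```

Freezing the criteria up front is the point: it stops the definition of success from drifting toward whatever the pilot happened to deliver.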

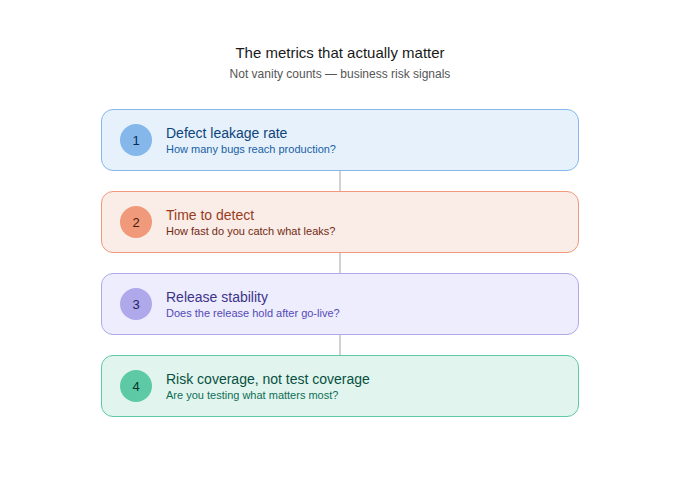

The Metrics That Actually Matter

Once you’re operational, the only metrics worth tracking are the ones that connect testing activity to release risk. Everything else is instrumentation.

Defect leakage rate

The percentage of defects that escape testing and reach production. This is the primary signal. If it’s not declining in the first 90 days, the coverage isn’t reaching the right areas.
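A minimal sketch of the calculation, using one common formulation: defects first found in production divided by all known defects for the period. The function names and the defect counts are illustrative assumptions, not client data.

```python
def defect_leakage_rate(caught_in_testing: int, escaped_to_production: int) -> float:
    """Share of all known defects that were first found in production."""
    total = caught_in_testing + escaped_to_production
    if total == 0:
        return 0.0  # no defects recorded, so nothing leaked
    return escaped_to_production / total


def is_declining(rates: list[float]) -> bool:
    """True when each period's rate is lower than the previous one."""
    return all(later < earlier for earlier, later in zip(rates, rates[1:]))


# Hypothetical counts for three 30-day periods: (caught in testing, escaped)
periods = [(80, 20), (88, 12), (95, 5)]
rates = [defect_leakage_rate(caught, escaped) for caught, escaped in periods]
print([f"{r:.0%}" for r in rates], is_declining(rates))
```

Whatever formulation you pick, agree on it with the partner before the baseline is measured, so the 90-day trend compares like with like.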

Time to detect

How long between a defect being introduced and being caught? In a well-functioning CI/CD-embedded QA setup, this should be hours, not days. If your partner is finding critical bugs during regression cycles rather than during development, they’re not embedded deeply enough.
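As a rough sketch, time to detect can be computed from two timestamps per defect. This assumes you can approximate "introduced" with the timestamp of the offending commit and "caught" with the time the defect was logged; both the field choice and the sample timestamps below are hypothetical.

```python
from datetime import datetime
from statistics import median


def hours_to_detect(introduced_at: datetime, detected_at: datetime) -> float:
    """Elapsed hours between a defect being introduced and being caught."""
    return (detected_at - introduced_at).total_seconds() / 3600


# Hypothetical (introduced, detected) pairs for three defects.
defects = [
    (datetime(2026, 4, 1, 9, 0), datetime(2026, 4, 1, 12, 0)),
    (datetime(2026, 4, 2, 10, 0), datetime(2026, 4, 2, 15, 0)),
    (datetime(2026, 4, 3, 9, 0), datetime(2026, 4, 6, 9, 0)),
]
median_ttd = median(hours_to_detect(start, end) for start, end in defects)
print(f"median time to detect: {median_ttd:.1f} hours")
```

The median is used here rather than the mean so that a single defect caught late in a regression cycle doesn't hide an otherwise healthy pattern. Track the outliers separately, because those are the ones that tell you where the partner isn't embedded deeply enough.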

Release stability

The proportion of releases requiring emergency hotfixes or rollbacks within 48 hours. This is the clearest proxy for whether your QA coverage is actually protecting production.
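A minimal sketch of that proportion, assuming your release log records when each release shipped and when its first hotfix or rollback happened, if any. The `Release` structure, its field names, and the sample releases are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Release:
    version: str
    shipped_at: datetime
    first_fix_at: Optional[datetime] = None  # first hotfix or rollback; None if there was none


def unstable_share(releases: list[Release], window: timedelta = timedelta(hours=48)) -> float:
    """Share of releases that needed a hotfix or rollback inside the window."""
    if not releases:
        return 0.0
    unstable = sum(
        1
        for r in releases
        if r.first_fix_at is not None and r.first_fix_at - r.shipped_at <= window
    )
    return unstable / len(releases)


# Hypothetical release log.
log = [
    Release("1.0", datetime(2026, 4, 1, 10, 0)),
    Release("1.1", datetime(2026, 4, 8, 10, 0), datetime(2026, 4, 9, 2, 0)),    # fixed after 16 hours
    Release("1.2", datetime(2026, 4, 15, 10, 0), datetime(2026, 4, 20, 10, 0)),  # fixed after 5 days
    Release("1.3", datetime(2026, 4, 22, 10, 0)),
]
print(f"{unstable_share(log):.0%} of releases needed an emergency fix within 48 hours")
```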

Risk coverage, not test coverage

Raw coverage percentages are vanity metrics. What matters is whether your highest-risk user journeys, revenue-impacting workflows, and compliance-sensitive paths are covered. Ask your partner to map their coverage to your risk register, not your code surface area. If they can’t do that, they’re measuring effort, not protection.
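To make the difference concrete, here is a sketch that weights coverage by risk instead of counting covered items. The journey names and the 1-to-5 risk weights are hypothetical stand-ins for whatever your own risk register contains.

```python
# Hypothetical risk register: journey -> risk weight (5 = highest risk).
risk_register = {
    "checkout-payment": 5,
    "session-handling": 4,
    "api-schema-contract": 3,
    "profile-settings": 1,
}

# Hypothetical set of journeys that currently have meaningful test coverage.
covered = {"api-schema-contract", "profile-settings"}


def raw_coverage(register: dict[str, int], covered_journeys: set[str]) -> float:
    """Share of journeys covered, ignoring how risky each one is."""
    if not register:
        return 0.0
    return sum(1 for journey in register if journey in covered_journeys) / len(register)


def risk_coverage(register: dict[str, int], covered_journeys: set[str]) -> float:
    """Share of total risk weight that sits behind covered journeys."""
    total = sum(register.values())
    if total == 0:
        return 0.0
    return sum(w for journey, w in register.items() if journey in covered_journeys) / total


print(f"raw: {raw_coverage(risk_register, covered):.0%}, "
      f"risk-weighted: {risk_coverage(risk_register, covered):.0%}")
```

In this made-up example half the journeys are covered, but they carry less than a third of the risk weight, because the two riskiest journeys are the uncovered ones. That gap is what a raw percentage hides.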

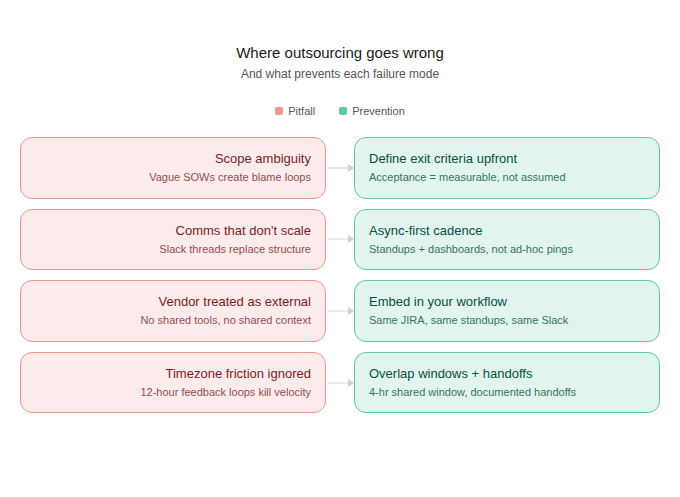

Where Outsourcing Goes Wrong (And How to Prevent It)

Failures cluster around a small set of predictable patterns. Knowing them in advance is the difference between catching a problem at week six and absorbing it at month twelve.

Scope ambiguity

‘You handle QA’ is not a scope. Define exactly which components, environments, releases, and test types are owned by the partner versus your internal team. Gaps are not acceptable. Overlap is fine.

Communication architecture that doesn’t scale

Weekly status emails work until something goes wrong and you need information in two hours. Define the communication protocol for normal operations, elevated risk situations, and critical incidents separately, before work starts.

Treating the vendor as external

Every study on this is consistent: outsourcing partnerships that integrate the external team into engineering standups, sprint reviews, and planning outperform those that operate through formal handoffs. Your partner needs enough context to make good prioritization decisions. Formal reporting doesn’t provide that context. Shared standups do.

Ignoring timezone friction in offshore models

Offshore models offer real cost advantages. Organizations outsourcing offshore report 60 to 70% cost reduction compared to equivalent in-house teams (Capgemini, 2025). But offshore only works if communication friction is actively managed. If your engineering team is in North America or Europe and your QA partner is operating on a 10-hour offset with no overlap window, you’re not running integrated QA. You’re running asynchronous defect reporting with a time delay built in.
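Before signing, it is worth computing the overlap window rather than guessing at it. The sketch below makes simplifying assumptions: working hours are given as local clock hours, time zones are fixed UTC offsets, and daylight saving time is ignored. The function name and the sample schedules are illustrative.

```python
def overlap_hours(start_a: float, end_a: float, utc_offset_a: float,
                  start_b: float, end_b: float, utc_offset_b: float) -> float:
    """Daily overlap, in hours, between two teams' working windows.

    Start and end are local clock hours (for example 9 and 17).
    Offsets are hours relative to UTC (for example -5 or +5).
    """
    # Convert both windows to UTC hours.
    a_start, a_end = start_a - utc_offset_a, end_a - utc_offset_a
    b_start, b_end = start_b - utc_offset_b, end_b - utc_offset_b

    # Schedules repeat daily, so compare A against B on neighbouring days too.
    total = 0.0
    for shift in (-48, -24, 0, 24, 48):
        low = max(a_start, b_start + shift)
        high = min(a_end, b_end + shift)
        total += max(0.0, high - low)
    return total


# Engineering at UTC-5 working 9 to 17, partner at UTC+5 working 9 to 18.
print(overlap_hours(9, 17, -5, 9, 18, 5))
# Same partner on a shifted 13 to 22 schedule.
print(overlap_hours(9, 17, -5, 13, 22, 5))
```

In the first case the two working days never touch, which is exactly the asynchronous reporting setup described above. Shifting the partner's schedule in the second case buys a three-hour shared window, and that kind of commitment belongs in the contract.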

What Good Looks Like at 90 Days

A successful outsourcing engagement at the 90-day mark has these characteristics:

• Defect leakage rate is down from baseline. If it hasn’t moved, the coverage isn’t reaching the right areas.

• Test suites are running inside your CI/CD pipeline, not alongside it. Integration should be complete within the first 30 days.

• Your internal team has more capacity for feature development, not less. If engineers are still managing QA handoffs, the operational model needs adjustment.

• You have a shared dashboard and a shared definition of release readiness. If go/no-go decisions are still happening on gut feel, the metrics infrastructure isn’t in place.

• The escalation process has been exercised. You want to know how they communicate before you need them to do it under pressure.

| The 2025 data shows a 30 to 40% faster release cycle and 60 to 70% reduction in quality-related overhead for organizations that outsource QA to mature partners. The same data shows consistent first-year failure for partnerships that select on cost, skip structured pilots, and operate through formal handoffs instead of integration. The difference is not the vendor. It’s the rigor of the decision you make before you sign. |

If you’re not sure whether your QA setup is broken enough to outsource, or broken in the right way for outsourcing to help, that’s the conversation to have before vendor selection starts.

Questions to Ask Before You Sign with a QA Company

Most vendor calls follow the same script. They show you a capability deck, walk through a case study you cannot verify, and ask about your timeline. That tells you nothing about what working with them actually looks like.

These seven questions cut through the pitch and get you the answers that actually predict partnership success.

- What bugs have you caught that saved a client real money?

- How does your team plug into our CI/CD pipeline technically, not conceptually?

- Who gets paged at 2 AM if something breaks?

- What do the first 30 days actually look like?

- If we walk away in 90 days, what do we keep?

- What is the most common reason clients leave you?

- Can you show me a live client dashboard right now?

Kualitatem works with enterprise engineering teams to audit current QA operations, identify structural gaps, and define whether outsourcing, augmentation, or internal process improvement is the right fix. The first conversation is a diagnostic, not a pitch.
