In-House QA vs Outsourced QA: Total Cost Comparison

- May 21, 2026

- Nabeesha Javed

Quality Assurance (QA) is what keeps software reliable and users happy. But when companies tighten their belts, QA budgets often come under the microscope. CTOs and CFOs start asking whether QA headcount and expenses are really justified.

One option is keeping QA in-house; the other is outsourcing it.

Both have real costs:

- Salaries and benefits

- Tools and training

- And a host of hidden costs

This article walks through the trade-offs between in-house QA vs. outsourced QA so leaders can see the full financial picture, not just the headline numbers.

Uncovering In-House QA Costs

1. Salaries and Benefits

The highest cost of an in-house QA team is its people. QA engineer salaries vary by experience and location, but they usually fall between $79,000 and $111,500 per year.

On top of that, benefits like health insurance, retirement contributions, and bonuses can increase payroll costs by another 30%.

For a team of five, base salaries alone run from $395,000 to $557,500 per year. With a 30% benefits load, total annual personnel costs come to roughly $513,500 to $724,750.
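The five-person figure follows from the salary range and the 30% benefits uplift quoted above. The sketch below is a rough check only: the function name is illustrative, and it assumes every engineer sits at the same point in the salary range.

```python
def annual_personnel_cost(base_salary: float, team_size: int,
                          benefits_rate: float = 0.30) -> float:
    """Salary plus benefits for a team where everyone earns `base_salary`."""
    return base_salary * (1 + benefits_rate) * team_size


# Low and high ends of the salary range cited in this article.
low = annual_personnel_cost(79_000, team_size=5)
high = annual_personnel_cost(111_500, team_size=5)

print(f"${low:,.0f} to ${high:,.0f} per year")
```

Changing `team_size` or `benefits_rate` shows how quickly payroll moves with headcount and benefits policy.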

2. Tools Expenditure

QA tools get expensive fast. Beyond the base subscription fees, costs can rise with upgrades and extra user seats.

Here is how QA tool expenses typically break down:

- Testing software licenses: Automation and QA tooling can range from free tiers to paid subscriptions; for example, Cypress Pro costs $67/month, and some QA software services quote $5,000 to $20,000 per month for more complex automation work.

- Bug tracking platforms: Paid bug-tracking tools often start around $50 to $150/month for basic plans, while more advanced subscriptions can begin around $250/month.

- Test management tools: Pricing varies widely, with options such as TestMonitor starting at $39/month for 3 users, PractiTest starting at $49/month, and qTest starting at $1,000 per user per year.

- Maintenance and upgrades: Ongoing updates, support, and add-ons can increase annual spend by about 10% to 20% beyond the base subscription cost, depending on the vendor and plan structure.

- Additional user licenses: Per-user pricing can add up quickly, with examples ranging from $3/user/month to $95/user/month depending on the platform and feature set.

- Integration costs: Setup and customization for CI/CD and reporting integrations can push one-time implementation costs into the $1,000 to $5,000+ range for teams needing tailored workflows.

For many teams, total QA tooling expenses can still land in the broad $2,000 to $10,000 per month range once multiple subscriptions and integrations are included.
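To turn these monthly figures into an annual budget line, multiply by twelve. The sketch below annualizes the overall tooling range and the per-user license range from the list above. The five-seat team is an assumption carried over from the personnel example, and the helper names are illustrative.

```python
MONTHS_PER_YEAR = 12


def annualize(monthly_low: float, monthly_high: float) -> tuple[float, float]:
    """Convert a monthly cost range into an annual one."""
    return monthly_low * MONTHS_PER_YEAR, monthly_high * MONTHS_PER_YEAR


def seat_cost_per_year(users: int, price_per_user_month: float) -> float:
    """Annual cost of per-user licensing for one tool."""
    return users * price_per_user_month * MONTHS_PER_YEAR


# Overall tooling range quoted above: $2,000 to $10,000 per month.
tooling_low, tooling_high = annualize(2_000, 10_000)

# Per-user licensing for an assumed five-person team, at $3 and $95 per user per month.
seats_low = seat_cost_per_year(5, 3)
seats_high = seat_cost_per_year(5, 95)

print(f"Tooling: ${tooling_low:,.0f} to ${tooling_high:,.0f} per year")
print(f"Seats for one tool: ${seats_low:,.0f} to ${seats_high:,.0f} per year")
```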

3. Training Investments

Keeping QA skills up to date is an ongoing investment, not a one-time cost.

From online courses to hands-on workshops, annual training can cost anywhere from $1,000 to $5,000 per employee, depending on the format and depth of the training.

4. Management Overhead Costs

Managing an in-house team adds another layer of expense.

This can account for 15%-20% of the total QA budget, since supervisors spend part of their time overseeing and coordinating the QA process.
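One subtlety is that a share of the total budget is not the same as a markup on direct costs. If overhead is 15%-20% of the total, the direct costs (people, tools, training) have to be grossed up to find it. The sketch below shows that arithmetic. The $600,000 direct-cost figure is a hypothetical input, not a number from this article.

```python
def total_budget_from_direct(direct_costs: float, overhead_share: float) -> float:
    """Total budget when overhead is `overhead_share` of that total."""
    if not 0 <= overhead_share < 1:
        raise ValueError("overhead_share must be in [0, 1)")
    return direct_costs / (1 - overhead_share)


def management_overhead(direct_costs: float, overhead_share: float) -> float:
    """Overhead amount implied by the direct costs and the overhead share."""
    return total_budget_from_direct(direct_costs, overhead_share) - direct_costs


direct = 600_000  # hypothetical annual direct QA costs

for share in (0.15, 0.20):
    overhead = management_overhead(direct, share)
    print(f"{share:.0%} of total budget -> ${overhead:,.0f} in management overhead")
```

At a 20% share, the overhead on $600,000 of direct costs is $150,000, which is 25% of the direct costs rather than 20%.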

5. Hidden Costs of In-House QA

Understanding hidden costs is crucial to building an informed budget:

- Attrition: Replacing a departing employee can cost 1.5 to 2.5 times their salary once recruitment and training expenses are counted.

- Ramp-Up Time: It takes new hires 3-6 months to reach full productivity.

- Idle Capacity: Teams often experience periods of underutilization, which represents a sunk cost.
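These three items can be put into rough numbers. The sketch below is illustrative only: the attrition multipliers and ramp-up months come from the list above, while the 50% average productivity during ramp-up, the 75% utilization rate, and the $120,000 loaded cost are hypothetical assumptions chosen for the example.

```python
def attrition_cost(salary: float, multiplier: float) -> float:
    """Replacement cost for one departure, as a multiple of salary."""
    return salary * multiplier


def ramp_up_cost(annual_loaded_cost: float, ramp_months: int,
                 avg_productivity: float = 0.5) -> float:
    """Value of output not delivered while a new hire ramps up.

    `avg_productivity` is an assumed average over the ramp period.
    """
    monthly_cost = annual_loaded_cost / 12
    return monthly_cost * ramp_months * (1 - avg_productivity)


def idle_cost(annual_loaded_cost: float, utilization: float) -> float:
    """Annual cost of paid time that is not spent on useful work."""
    return annual_loaded_cost * (1 - utilization)


salary = 79_000          # low end of the salary range in this article
loaded_cost = 120_000    # hypothetical salary plus benefits for one engineer

print(f"Attrition: ${attrition_cost(salary, 1.5):,.0f} to "
      f"${attrition_cost(salary, 2.5):,.0f} per departure")
print(f"Ramp-up:   ${ramp_up_cost(loaded_cost, 3):,.0f} to "
      f"${ramp_up_cost(loaded_cost, 6):,.0f} per new hire")
print(f"Idle time: ${idle_cost(loaded_cost, 0.75):,.0f} per engineer per year")
```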

Why Outsourced QA is Becoming the Smarter Choice

1. Outsourcing Fees

Outsourcing QA provides flexibility and expertise.

Outsourcing fees typically range from $20,000 to $50,000 per month, depending on the services included.

Although this may seem high at first, the comprehensive nature of these services often yields better overall value.

2. Managed QA Services

Outsourcing firms frequently offer managed QA services that cover:

- Test planning

- Automation

- Regression testing

- Defect reporting

- Release validation

Managed QA services make testing easier to run and easier to scale. They help businesses:

- Scale testing as project needs grow

- Reduce the workload on internal teams

- Improve test coverage across releases

- Stay informed through regular reports and performance insights

3. ROI for QA Outsourcing

Investing in outsourced QA can deliver strong value. Outsourcing firms often bring experienced QA professionals and proven processes that can improve test coverage.

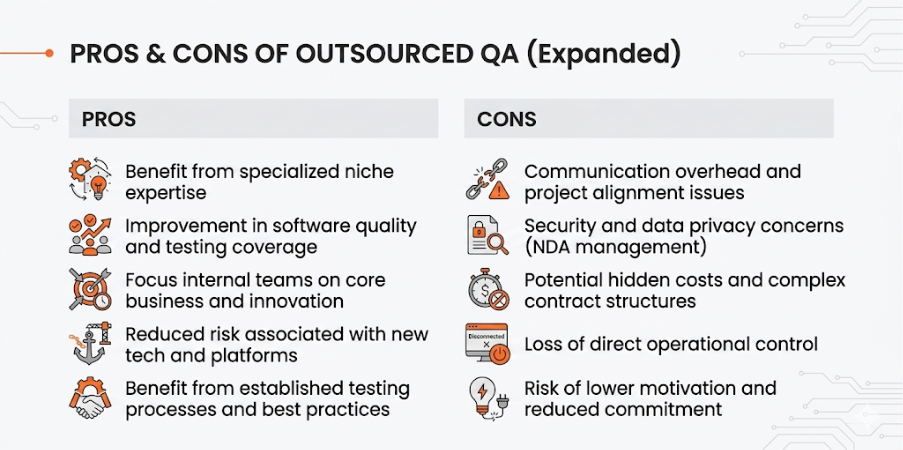

However, outsourcing is not a perfect fit for every organization. Some teams may face challenges with:

- Communication

- Time zone differences

- Less direct control over testing priorities

- The time needed to onboard an external partner

The best ROI usually comes when the outsourcing model aligns with the company’s goals.

4. Reduced Hidden Costs in Outsourcing

Outsourcing can minimize various hidden costs:

- Lower Attrition Rates: Outsourcing firms often maintain lower turnover, decreasing the frequency and costs of disruption.

- Quick Ramp-Up Times: Outsourcing companies can mobilize resources almost immediately, eliminating long onboarding processes.

- Efficient Resource Management: Outsourcing firms can dynamically adjust their workforce, thus reducing idle time.

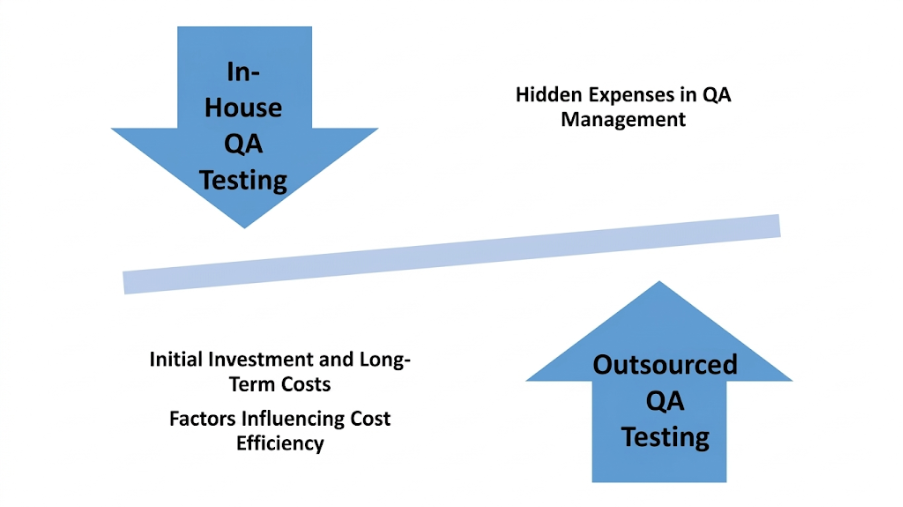

Comparing In-House and Outsourced QA Costs

Comparative Analysis: In-House vs Outsourced QA

Here’s a simple side-by-side comparison of in-house and outsourced QA costs:

| Cost Category | In-House QA | Outsourced QA |
| --- | --- | --- |
| Salaries & Benefits | $100,000+ per engineer per year | $20,000 – $50,000 monthly fee |
| Tools & Technology | $2,000 – $10,000/month | Generally included |
| Training | $1,000 – $5,000 per employee per year | Usually covered |
| Management Overhead | 15%-20% of the budget | Included |
| Attrition Costs | High; 1.5-2.5x salary | Lower rates |
| Ramp Time | 3-6 months | Almost immediate |
| Idle Capacity | Often high | Close to zero |
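The rows above can be rolled into a rough annual total for each model. The sketch below is a back-of-the-envelope estimate, not a definitive model: it uses the ranges quoted in this article, assumes a five-person in-house team, treats management overhead as a share of the total budget, and leaves out attrition, ramp-up, and idle capacity. The function names are illustrative. The outsourced fee is taken at face value, so the comparison is like-for-like only if that fee covers comparable testing capacity.

```python
def in_house_annual_total(base_salary: float, tools_per_month: float,
                          training_per_engineer: float, overhead_share: float,
                          team_size: int = 5, benefits_rate: float = 0.30) -> float:
    """Annual in-house QA cost, excluding attrition, ramp-up, and idle time."""
    personnel = base_salary * (1 + benefits_rate) * team_size
    tools = tools_per_month * 12
    training = training_per_engineer * team_size
    direct = personnel + tools + training
    # Overhead is a share of the total budget, so gross up the direct costs.
    return direct / (1 - overhead_share)


def outsourced_annual_total(monthly_fee: float) -> float:
    """Annual outsourced QA cost for a flat monthly fee."""
    return monthly_fee * 12


in_house_low = in_house_annual_total(79_000, 2_000, 1_000, 0.15)
in_house_high = in_house_annual_total(111_500, 10_000, 5_000, 0.20)
outsourced_low = outsourced_annual_total(20_000)
outsourced_high = outsourced_annual_total(50_000)

print(f"In-house:   ${in_house_low:,.0f} to ${in_house_high:,.0f} per year")
print(f"Outsourced: ${outsourced_low:,.0f} to ${outsourced_high:,.0f} per year")
```

Swapping in your own salary, tooling, and fee figures is the quickest way to see where the two ranges overlap for your team.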

Outsourced QA Benefits That Go Beyond Cost

When figuring out the total cost of QA ownership, it’s vital to consider factors beyond immediate expenses.

Here’s why many companies see more long-term value in outsourcing:

1. It helps keep costs under control

When you outsource QA, you can avoid many of the extra costs that come with hiring and training. Over time, that can make a real difference to your budget.

2. You get experienced testers from day one

Outsourcing also gives you access to skilled QA professionals who know how to spot issues early and help deliver a smoother experience for your users.

3. Your internal team can stay focused

Instead of spending time on day-to-day testing tasks, your internal team can focus on bigger priorities like product strategy, development, and growth.

4. It’s easier to scale when needs change

Outsourcing makes it much easier to ramp testing up or down based on your project needs, without the pressure of building and maintaining a larger full-time team.

Outsourced QA can be a smart way to improve testing, but it comes with challenges that every team should think through before making the move.

The Hybrid Model: Best of Both Worlds

Many mature engineering organizations land on a hybrid model. Here is a structure that works well:

In-house core (2-3 people):

- QA Lead / Manager: Owns QA strategy, standards, and test plans. Deep product knowledge. Manages both in-house and outsourced testers.

- 1-2 Senior QA Engineers: Handle the most complex, domain-specific testing. Own the automation framework architecture. Serve as knowledge anchors.

Outsourced capacity (flexible):

- 2-5 QA Engineers: Execute test plans, develop automated tests, and perform regression testing. Scale up for releases, scale down between sprints.

- Specialized roles as needed: Performance tester for quarterly load tests, security tester for annual penetration testing, mobile specialist for app releases.

This model gives you institutional knowledge (in-house core), scalable capacity (outsourced team), and specialized skills on demand (outsourced specialists).

Risk Comparison

| Risk | In-House | Outsourced | Hybrid |
| --- | --- | --- | --- |
| Key person dependency | High | Low (replacement guarantee) | Medium |
| Knowledge loss on turnover | High | Medium (documentation culture) | Low |
| Scaling speed | Slow (months) | Fast (weeks) | Fast |
| Domain knowledge depth | High | Medium (grows over time) | High |
| Cost overrun risk | Medium (hidden costs) | Low (predictable hourly rates) | Low |
| Quality consistency | High | Depends on partner | High |

With Kualitatem’s 94% client retention rate, the usual outsourcing concern of frequent turnover is significantly reduced, helping QA teams maintain continuity and build deeper product knowledge over time.

Final Thoughts

Choosing between in-house and outsourced QA comes down to understanding the full cost picture, including both the obvious expenses and the hidden ones.

While outsourcing may not always present lower initial costs, the potential savings in hidden costs can provide significant long-term value.

See how we helped a leading ANZ online retailer reduce their ramp-up time to zero in our case study, "Performance And Business Process Stability Testing".

If you want to take a closer look at your QA needs, visit Kualitatem and connect with our experts to find the approach that best fits your team.