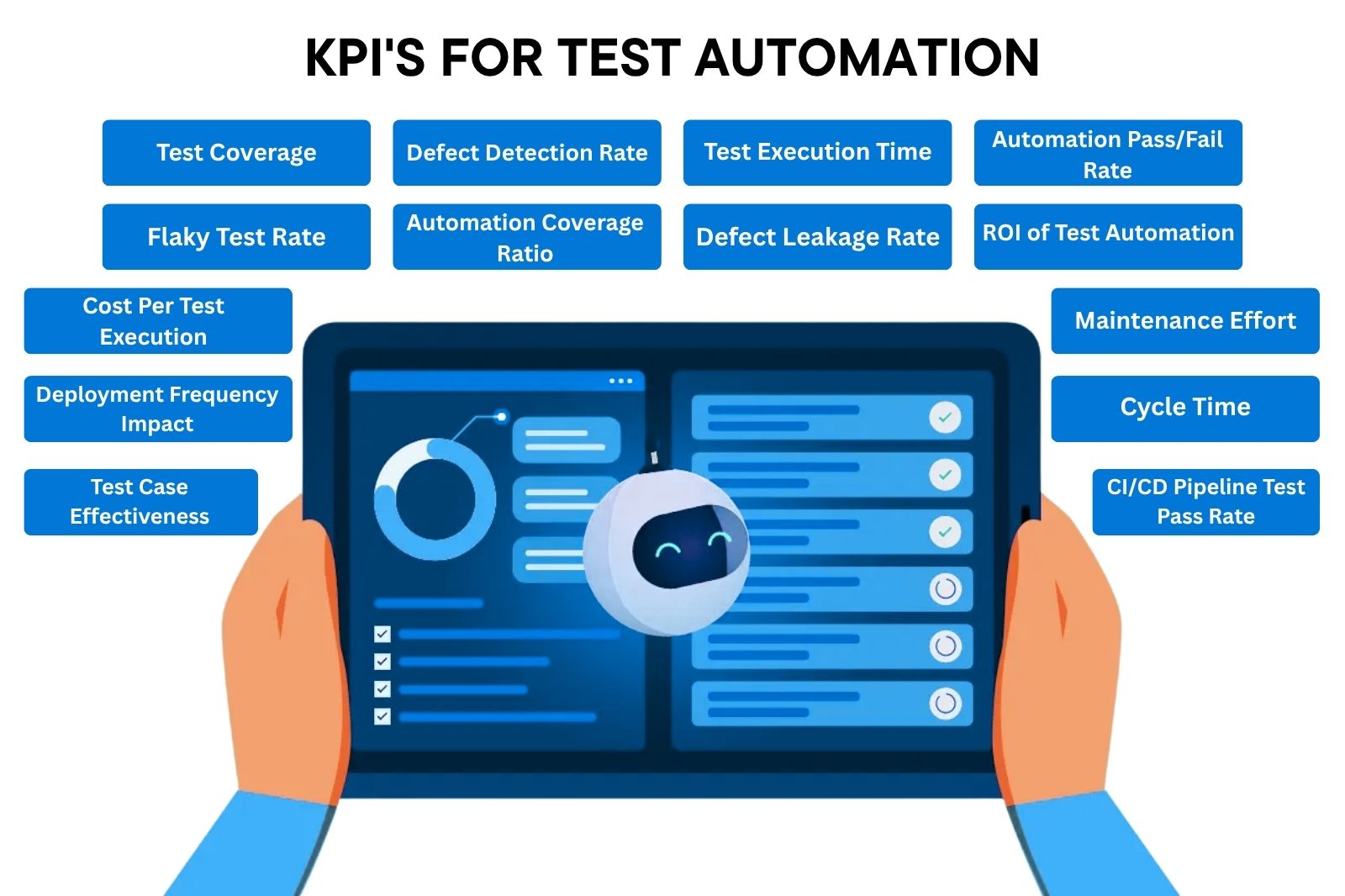

15 Key Performance Indicators for Test Automation

- April 10, 2026

- Nabeesha Javed

Picture this: as a technology leader, you walk into a quarterly review with 14 dashboards, 5,000 automated test cases, and a 92% pass rate.

You walk out without a single decision, because your numbers are too technical for the business people in the room. What your C-suite really cares about is:

- Shipping speed

- Production stability

- Cost per release

- Incident reduction

If you don’t present your metrics in those terms, your automation program may not get the funding it needs.

Track all 15 KPIs in one place. We built a free metric tracker so you can stop guessing and start measuring what the board actually cares about.

Download Metric Tracker →

This article translates the 15 most important test automation metrics into that language for you.

For each metric, you will find how to calculate it, what the benchmark looks like in practice, where the business risk lives if you ignore it, and how long it realistically takes to achieve a healthy baseline.

But first, let’s look at what key performance indicators (KPIs) for automation testing are and why they matter so much.

Why Test Automation KPIs Matter

Stakeholders want to know if testing justifies the budget, supports release decisions, and delivers real ROI. Four business outcomes make automation metrics worth tracking:

Release visibility: Know whether a build is safe to ship, backed by data, not gut feeling.

Risk reduction: Catch issues before customers, regulators, or the press do.

Cost control: See whether your cost of quality is trending in the right direction.

Speed assurance: Confirm automation is actually accelerating delivery, not just running alongside it.

Metrics vs. KPIs: What’s the Difference

A metric is a number. A KPI is a number tied to a business goal with a target and a consequence.

Test execution count is a metric.

“Maintain a CI/CD pipeline pass rate above 90% to support weekly releases” is a KPI.

KPIs create accountability. Metrics get noted and forgotten. When a threshold is tied to a business outcome, people act.

The Business Value of Getting This Right

Teams that track the right metrics, surface signal over noise, and align QA data with business goals share one thing in common: they make faster, smarter decisions.

15 Test Automation Metrics (KPIs) That Drive Business Decisions

1. Test Coverage

Test coverage tells you what percentage of your application your automated tests actually exercise.

For executives, the real question is whether the right areas are covered.

A banking platform with 80% coverage that excludes payment processing is not 80% safe. It is 100% exposed where it matters most.

Coverage gaps discovered in production do not stay in QA reports. They show up in incident logs, SLA breaches, and customer complaints.

Risk-based coverage, prioritizing business-critical flows, gives leadership a defensible answer when auditors or the board ask how quality is being ensured.

KPI Formula

(Requirements Covered by Automated Tests / Total Testable Requirements) × 100 = Test Coverage
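A minimal Python sketch of the calculation, assuming you can export a mapping from each testable requirement to the automated tests that exercise it. The `requirement_coverage` function, the requirement IDs, and the test names are all hypothetical. Counting a requirement once if at least one automated test touches it keeps the ratio at or below 100% even when several tests target the same requirement, and listing the uncovered requirements answers the risk-based question directly.

```python
def requirement_coverage(requirement_to_tests: dict[str, list[str]]) -> float:
    """Percentage of testable requirements exercised by at least one automated test."""
    if not requirement_to_tests:
        return 0.0
    covered = sum(1 for tests in requirement_to_tests.values() if tests)
    return 100 * covered / len(requirement_to_tests)


# Hypothetical export from a test management tool:
# requirement ID -> automated tests that exercise it
requirements = {
    "REQ-LOGIN": ["test_login_ok", "test_login_locked"],
    "REQ-PAYMENT": [],  # business-critical flow with no automation yet
    "REQ-REPORTS": ["test_monthly_report"],
    "REQ-PROFILE": ["test_update_email"],
}

uncovered = [req for req, tests in requirements.items() if not tests]
print(f"Test coverage: {requirement_coverage(requirements):.1f}%")  # Test coverage: 75.0%
print("Uncovered requirements:", uncovered)  # Uncovered requirements: ['REQ-PAYMENT']
```

A 75% figure looks healthy on its own; the uncovered list is what shows that the gap sits in payments.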

Benchmark

70–85% for mature teams; below 60% is a red flag for regulated industries.

Time to Achieve

Baseline within 30 days; 70%+ achievable in 3–6 months with a structured automation program

2. Defect Detection Rate

This metric tracks how many defects your automation suite catches before they reach production. It is one of the most direct indicators of whether QA is delivering real business protection or just running scripts.

A low defect detection rate before release means higher costs after it, because defects are cheaper to fix before they ship. For enterprise software, the cost gap widens further when you factor in customer impact, rollback overhead, and regulatory scrutiny.

KPI Formula

(Defects Found in Testing / Total Defects Found) × 100 = Defect Detection Rate
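As a minimal sketch in Python, assuming "total defects found" means defects found in testing plus defects found in production for the same release or reporting period (the function name and the counts are illustrative, not from a specific tool):

```python
def defect_detection_rate(found_in_testing: int, found_in_production: int) -> float:
    """(Defects found in testing / total defects found) x 100.

    Assumes total defects = defects found in testing + defects found in production
    for the same release or reporting period.
    """
    total = found_in_testing + found_in_production
    if total == 0:
        raise ValueError("no defects recorded for this period")
    return 100 * found_in_testing / total


# Hypothetical release: 46 defects caught by the suite, 4 reported from production
print(f"Defect detection rate: {defect_detection_rate(46, 4):.1f}%")  # 92.0%
```

Raising an error on an empty period is a deliberate choice: reporting 0% or 100% when nothing was found would distort the sprint-over-sprint trend.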

Benchmark

Best-in-class teams detect 85–95% of defects pre-production

Business Risk

Defects escaping to production damage trust, increase support costs and in regulated environments, can trigger audit findings.

Time to Achieve

Measurable from day one; improvement tracked sprint over sprint

3. Test Execution Time

Every hour your test suite takes to run is an hour your engineering team waits to deploy. At scale, slow test suites force teams to either delay releases or cut testing; neither is acceptable when speed and quality both matter.

For organizations running continuous delivery pipelines, test execution time directly affects deployment frequency. Teams that move from 4-hour suites to 30-minute suites do not just ship faster; they ship more often with faster rollback capability when something goes wrong.

KPI Formula

Total wall-clock time from test run trigger to results report
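A minimal Python sketch for measuring wall-clock time around a test command, using a monotonic clock so system clock changes do not skew the result. The `timed_test_run` helper is hypothetical, and the placeholder command stands in for your real suite. Note that this only covers the run itself; queue and runner-provisioning time before the command starts would have to come from your CI system's own timestamps.

```python
import subprocess
import sys
import time


def timed_test_run(command: list[str]) -> tuple[float, int]:
    """Run a test command and return (wall-clock seconds, exit code)."""
    start = time.perf_counter()  # monotonic, high-resolution clock
    result = subprocess.run(command)
    elapsed = time.perf_counter() - start
    return elapsed, result.returncode


if __name__ == "__main__":
    # Hypothetical placeholder: replace with your real suite, e.g. ["pytest", "tests/"]
    seconds, exit_code = timed_test_run([sys.executable, "-c", "print('suite placeholder')"])
    print(f"Execution time: {seconds / 60:.2f} min (exit code {exit_code})")
```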

Benchmark

Under 10 minutes for unit/integration; under 30 minutes for full regression in a CI/CD pipeline

Business Risk

Slow suites create a bypass culture: developers skip or shortcut testing to meet deadlines, increasing production risk.

Time to Achieve

Parallelization and infrastructure upgrades can cut execution time 50–70% within 60–90 days

4. Test Automation Pass/Fail Rate

Pass rate is often misread as a quality signal. A 95% pass rate means nothing if 30% of those passes are false passes from poorly written tests that would still pass if the feature broke.

What matters is whether the failures flagged are real, actionable issues, and whether the passes are genuine assurance.

❓Executives should ask: when the pass rate drops, does the team know immediately, and does it block release?

Teams with strong governance treat any unexpected pass rate drop as an escalation trigger, not just a metric to note in the next weekly report.

KPI Formula

Pass Rate (%) = (Number of Passed Tests / Total Executed Tests) × 100

Fail Rate (%) = (Number of Failed Tests / Total Executed Tests) × 100

🧠 Example

Total tests executed = 100

Passed = 85

Failed = 15

Pass Rate = 85%

Fail Rate = 15%
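The same example as a minimal Python sketch. It assumes every executed test ends as either passed or failed; skipped or errored tests would need their own category, and the `pass_fail_rates` name is illustrative.

```python
def pass_fail_rates(passed: int, failed: int) -> tuple[float, float]:
    """Return (pass rate %, fail rate %) for a run where every executed test passed or failed."""
    executed = passed + failed
    if executed == 0:
        raise ValueError("no tests executed")
    return 100 * passed / executed, 100 * failed / executed


pass_rate, fail_rate = pass_fail_rates(passed=85, failed=15)
print(f"Pass rate: {pass_rate:.0f}%, fail rate: {fail_rate:.0f}%")  # Pass rate: 85%, fail rate: 15%
```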

Benchmark

Stable mature suites: 90–98% pass rate on clean builds

Business Risk

False passes create false confidence. False fails create alert fatigue and get ignored. It is the same problem in the opposite direction.

Time to Achieve

Meaningful signal within 2–4 weeks of consistent test execution

5. Flaky Test Rate

A flaky test is one that sometimes passes and sometimes fails without any code change. Individually, flaky tests look harmless. Collectively, they are one of the most common reasons engineering teams lose trust in their test suites and stop acting on failures.

Google’s engineering team documented that flaky tests at scale created a “false signal” problem that took significant effort to remediate. For enterprise teams, flakiness above 5% means your test suite is actively misleading the people making release decisions.

KPI Formula

(Tests That Produce Inconsistent Results / Total Tests) × 100 = Flaky Test Rate
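One way to operationalize "inconsistent results" is sketched below in Python. It assumes your CI logs each run as a `(test_name, commit_sha, outcome)` record (a hypothetical format) and counts a test as flaky if it both passed and failed on the same commit, which matches the "no code change" definition. A test that fails on one commit and passes on a later one is not counted, because the code changed in between.

```python
from collections import defaultdict


def flaky_test_rate(runs: list[tuple[str, str, str]]) -> tuple[float, set[str]]:
    """runs: (test_name, commit_sha, outcome) records, outcome 'pass' or 'fail'.

    A test counts as flaky if it both passed and failed on the same commit,
    i.e. its result changed without any code change.
    """
    outcomes = defaultdict(set)  # (test, commit) -> set of outcomes seen
    for test, commit, outcome in runs:
        outcomes[(test, commit)].add(outcome)
    all_tests = {test for test, _, _ in runs}
    flaky = {test for (test, _), seen in outcomes.items() if {"pass", "fail"} <= seen}
    rate = 100 * len(flaky) / len(all_tests) if all_tests else 0.0
    return rate, flaky


# Hypothetical run history
history = [
    ("test_checkout", "a1b2c3", "pass"),
    ("test_checkout", "a1b2c3", "fail"),  # same commit, different result
    ("test_login", "a1b2c3", "pass"),
    ("test_login", "a1b2c3", "pass"),
    ("test_search", "a1b2c3", "fail"),
    ("test_search", "d4e5f6", "pass"),    # different commits: not evidence of flakiness
    ("test_profile", "d4e5f6", "pass"),
]
rate, flaky = flaky_test_rate(history)
print(f"Flaky test rate: {rate:.1f}% -> {sorted(flaky)}")  # 25.0% -> ['test_checkout']
```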

Benchmark

Under 2% is a common target for mature teams; above 5% requires immediate attention

Business Risk

High flakiness causes engineers to re-run tests rather than investigate failures, masking real defects.

Time to Achieve

Detection is immediate with proper logging; remediation takes 4–8 weeks of dedicated cleanup

6. Automation Coverage Ratio

This is distinct from test coverage. Automation coverage ratio answers how much of your existing test library has been converted from manual to automated execution. It is a key signal of how mature and scalable your automation investment is.

Manual testing does not scale with release velocity. A team running 500 manual regression tests on a two-week sprint cycle cannot realistically maintain that cadence as the product grows. Automation coverage ratio tracks whether you are building a sustainable quality operation or one that will require headcount every time the product expands.

KPI Formula

(Automated Test Cases / Total Test Cases) × 100
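A minimal Python sketch, assuming your test management tool can export each test case ID with a flag saying whether it is automated. The IDs and the 500-case library echo the manual regression example in this section and are purely illustrative.

```python
def automation_coverage_ratio(case_is_automated: dict[str, bool]) -> float:
    """(Automated test cases / total test cases) x 100."""
    if not case_is_automated:
        return 0.0
    automated = sum(case_is_automated.values())  # True counts as 1
    return 100 * automated / len(case_is_automated)


# Hypothetical regression library: 500 cases, the first 240 already automated
library = {f"TC-{i:03d}": i < 240 for i in range(500)}
ratio = automation_coverage_ratio(library)
print(f"Automation coverage ratio: {ratio:.0f}%")  # 48%
print(f"Manual cases left: {len(library) - sum(library.values())}")  # 260
```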

Benchmark

40–60% is a reasonable mid-maturity target; 70%+ for high-velocity teams

Business Risk

Low automation coverage creates a manual testing bottleneck that caps release frequency regardless of engineering speed.

Time to Achieve

Meaningful ratios achievable within 6 months with a dedicated automation program

7. Defect Leakage Rate

Defect leakage measures how many defects escape your QA process and reach users. The board sees it in SLA reports; customer success sees it in support tickets.

In enterprise software, a single high-severity defect reaching production can cost more to resolve than an entire month of QA investment. For fintech and banking clients, leaked defects carry regulatory weight on top of the operational cost.

KPI Formula

(Defects Found in Production / Total Defects Found) × 100 = Defect Leakage
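Tracked per release, the calculation might look like the Python sketch below. The release names and counts are hypothetical. Under the assumption that every defect found is either found in testing or found in production, leakage and defect detection rate share the same denominator and add up to 100%.

```python
def defect_leakage_rate(found_in_production: int, found_in_testing: int) -> float:
    """(Defects found in production / total defects found) x 100."""
    total = found_in_production + found_in_testing
    if total == 0:
        raise ValueError("no defects recorded for this release")
    return 100 * found_in_production / total


# Hypothetical per-release counts: (found in testing, found in production)
releases = {"R1": (57, 3), "R2": (78, 2)}
for name, (in_testing, in_production) in releases.items():
    leakage = defect_leakage_rate(in_production, in_testing)
    print(f"{name}: leakage {leakage:.1f}%")
# R1: leakage 5.0%
# R2: leakage 2.5%
```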

Benchmark

Under 5% for mature QA programs; under 2% for mission-critical systems

Business Risk

High leakage erodes user trust, triggers SLA penalties, and in regulated industries, creates audit exposure.

Time to Achieve

Tracked continuously; reduction of 30–50% achievable in one quarter with targeted automation improvements

8. ROI of Test Automation

This is the metric that justifies every automation investment conversation with leadership. The ROI calculation compares the total cost of building and maintaining automation against the cost savings from reduced manual testing, faster defect detection, and fewer production incidents. Most organizations that measure this properly find positive ROI within 12–18 months of a structured automation program.

KPI Formula

((Cost Savings from Automation − Cost of Automation) / Cost of Automation) × 100
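A worked example of the formula as a Python sketch. Every input figure is hypothetical, and how you estimate savings (manual hours avoided, incidents prevented) is an assumption you would replace with your own finance data.

```python
def automation_roi(cost_savings: float, cost_of_automation: float) -> float:
    """((Cost savings - cost of automation) / cost of automation) x 100."""
    if cost_of_automation <= 0:
        raise ValueError("cost of automation must be positive")
    return 100 * (cost_savings - cost_of_automation) / cost_of_automation


# Hypothetical annual figures; replace with your own data
manual_hours_saved = 2_400        # regression hours no longer run by hand
hourly_cost = 50.0                # loaded cost per QA hour
incident_cost_avoided = 30_000.0  # estimated cost of production incidents prevented
savings = manual_hours_saved * hourly_cost + incident_cost_avoided  # 150,000
cost = 100_000.0                  # tooling, infrastructure, build and maintenance effort

print(f"ROI: {automation_roi(savings, cost):.0f}%")  # ROI: 50%
```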

Benchmark

Positive ROI typically within 12–18 months; 200–400% ROI over 3 years is achievable for large-scale programs

Business Risk

Without measuring ROI, automation spend looks like overhead. With it, it becomes a documented competitive advantage.

Time to Achieve

Initial ROI data available within 6 months; full ROI picture clear at 12–18 months

9. Cost Per Test Execution

As test suites scale, execution costs scale with them, including cloud compute, infrastructure, licensing, and engineering time. Cost per test execution brings discipline to that growth, ensuring automation remains economically sound as it expands.

Teams that optimize this metric do not just save money; they make the business case for running tests more frequently. When each execution costs less, you can run tests on every commit rather than just nightly, catching issues earlier when they are cheapest to fix.

KPI Formula

Total Test Execution Cost / Number of Tests Executed (per period)
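A minimal Python sketch for one reporting period. The cost categories mirror the ones listed in this section, but the amounts and test count are hypothetical; in practice they would come from your cloud billing, licensing, and time-tracking data.

```python
def cost_per_test_execution(costs: dict[str, float], tests_executed: int) -> float:
    """Total test execution cost / number of tests executed in the same period."""
    if tests_executed <= 0:
        raise ValueError("no test executions in this period")
    return sum(costs.values()) / tests_executed


# Hypothetical monthly costs in dollars
monthly_costs = {"cloud_compute": 4_200.0, "licensing": 1_800.0, "engineering_time": 6_000.0}
per_test = cost_per_test_execution(monthly_costs, tests_executed=120_000)
print(f"Cost per test execution: ${per_test:.3f}")  # $0.100
```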

Benchmark

Highly variable by stack; aim for 20–30% cost reduction year-over-year as suites scale

Business Risk

Unmanaged execution costs can make automation economically unsustainable, leading to scaled-back testing frequency.

Time to Achieve

Baseline measurable immediately; optimization within 30–60 days of cost analysis

10. Maintenance Effort

Test maintenance is the hidden cost of automation. Poorly structured test suites require constant upkeep every time the application changes, and if maintenance consumes more engineer time than the tests save, the automation investment becomes a net loss.

Best practice benchmarks suggest that maintenance should consume no more than 20–25% of total QA engineering time. Teams exceeding 40% are effectively working for their test suite rather than using it to work for them.

KPI Formula

(Maintenance Hours per Sprint / Total QA Engineering Hours per Sprint) × 100 = Maintenance Effort (%)
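A minimal Python sketch, expressed as a percentage so it lines up with the benchmark. It assumes QA hours are logged per activity each sprint; the activity names and hours are hypothetical, and the status thresholds are taken from this section's benchmark (under 20% healthy, above 35% a sign of architectural problems).

```python
def maintenance_effort(hours_by_activity: dict[str, float], maintenance_key: str = "maintenance") -> float:
    """Maintenance hours as a percentage of all QA engineering hours in the sprint."""
    total = sum(hours_by_activity.values())
    if total == 0:
        raise ValueError("no QA hours logged for this sprint")
    return 100 * hours_by_activity.get(maintenance_key, 0.0) / total


def maintenance_status(percent: float) -> str:
    # Thresholds from the benchmark in this section
    if percent < 20:
        return "healthy"
    if percent > 35:
        return "architectural problems likely"
    return "watch"


# Hypothetical sprint log (hours)
sprint = {"new_automation": 120.0, "maintenance": 48.0, "exploratory": 32.0}
effort = maintenance_effort(sprint)
print(f"Maintenance effort: {effort:.0f}% ({maintenance_status(effort)})")  # 24% (watch)
```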

Benchmark

Under 20% of QA time on maintenance; above 35% indicates architectural problems in the test suite

Business Risk

High maintenance burden slows automation growth and makes QA a bottleneck rather than an accelerator.

Time to Achieve

Measurable within one sprint; structural improvements take 2–3 months to implement

11. Deployment Frequency Impact

DORA research consistently shows that high-performing engineering organizations deploy more frequently and recover from failures faster. Test automation is a core enabler of that capability. This metric tracks whether your automation investment is actually moving the needle on deployment velocity.

The correlation is direct: teams with high-confidence automated suites deploy 2–5x more frequently than teams without. For SaaS organizations, deployment frequency maps directly to feature delivery speed and competitive responsiveness.

KPI Formula

Deployment frequency before automation vs. deployment frequency after automation, expressed as a ratio or an absolute change
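A before/after comparison could be sketched in Python as follows, assuming you can export a list of production deploy dates from your CI/CD system. The windows, dates, and cadences are hypothetical; using equal-length windows keeps the ratio fair.

```python
from datetime import date, timedelta


def deploys_per_week(deploy_dates: list[date], start: date, end: date) -> float:
    """Average deployments per week within the window [start, end)."""
    days = (end - start).days
    if days <= 0:
        raise ValueError("window must be at least one day long")
    count = sum(1 for d in deploy_dates if start <= d < end)
    return count / (days / 7)


# Hypothetical deploy history: 12 weeks before and 12 weeks after CI/CD integration
before_start = date(2025, 7, 1)
after_start = before_start + timedelta(weeks=12)
history = [before_start + timedelta(days=14 * i) for i in range(6)]   # every two weeks
history += [after_start + timedelta(days=2 * i) for i in range(30)]  # every other day

before = deploys_per_week(history, before_start, after_start)
after = deploys_per_week(history, after_start, after_start + timedelta(weeks=12))
print(f"Before: {before:.1f}/week, after: {after:.1f}/week, ratio {after / before:.1f}x")
# Before: 0.5/week, after: 2.5/week, ratio 5.0x
```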

Benchmark

Elite DORA performers deploy on demand or multiple times per day; high performers deploy daily to weekly

Business Risk

No improvement in deployment frequency after automation investment suggests the suite is not integrated into the CI/CD pipeline.

Time to Achieve

Measurable impact within 60–90 days of CI/CD pipeline integration

12. Mean Time to Detect (MTTD)

MTTD measures the lag between when a defect is introduced and when your automation catches it. Shorter MTTD means cheaper fixes, faster feedback loops, and less developer context-switching to diagnose issues days after writing the code.

Code that passes review but fails in QA three days later requires the developer to rebuild mental context around work they considered done.

Teams with MTTD under one hour operate fundamentally differently from teams where defects surface in the next day’s test run.

KPI Formula

Average time from defect introduction to test failure detection
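A minimal Python sketch of the average. The hard part in practice is the "introduced at" timestamp: this sketch assumes it is the commit time of the change later identified as the root cause, and that "detected at" is the time of the first failing automated run. Both field choices and the sample events are hypothetical.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(events: list[tuple[datetime, datetime]]) -> timedelta:
    """events: (introduced_at, detected_at) pairs, one per defect caught by automation."""
    if not events:
        raise ValueError("no detected defects to average")
    total = sum((detected - introduced for introduced, detected in events), timedelta())
    return total / len(events)


# Hypothetical defects: root-cause commit time -> first failing test run
events = [
    (datetime(2026, 4, 1, 9, 0), datetime(2026, 4, 1, 9, 20)),    # 20 min
    (datetime(2026, 4, 1, 11, 0), datetime(2026, 4, 1, 11, 40)),  # 40 min
    (datetime(2026, 4, 2, 14, 0), datetime(2026, 4, 2, 15, 30)),  # 90 min
]
print("MTTD:", mean_time_to_detect(events))  # MTTD: 0:50:00
```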

Benchmark

Under 1 hour for teams with shift-left testing; under 24 hours for most CI/CD setups

Business Risk

Long MTTD means defects accumulate before discovery, making root cause analysis harder and remediation more expensive.

Time to Achieve

Baseline measurable immediately; shift-left improvements achievable within 30–60 days

13. Cycle Time

Cycle time measures how long it takes from code commit to production deployment. Test automation is not the only variable, but it is often the largest bottleneck. When automation runs fast and reliably, cycle time compresses. When it does not, it becomes the ceiling on delivery speed.

For product-led organizations, cycle time is a competitive metric.

The teams that can take a customer-reported issue from report to fix in hours rather than days have a structural advantage in retention and satisfaction.

KPI Formula

Time from code commit to production deployment (average per release)
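A minimal Python sketch of the per-release average, assuming you can pair each commit timestamp with the timestamp of the production deployment that shipped it. The release and its three commits are hypothetical.

```python
from datetime import datetime


def average_cycle_time_hours(changes: list[tuple[datetime, datetime]]) -> float:
    """changes: (committed_at, deployed_at) pairs for the commits in one release."""
    if not changes:
        raise ValueError("no changes in this release")
    hours = [(deployed - committed).total_seconds() / 3600 for committed, deployed in changes]
    return sum(hours) / len(hours)


# Hypothetical release deployed at 15:00 containing three commits
deployed_at = datetime(2026, 4, 8, 15, 0)
changes = [
    (datetime(2026, 4, 8, 9, 0), deployed_at),   # 6 h
    (datetime(2026, 4, 8, 11, 0), deployed_at),  # 4 h
    (datetime(2026, 4, 8, 13, 0), deployed_at),  # 2 h
]
print(f"Average cycle time: {average_cycle_time_hours(changes):.1f} h")  # 4.0 h
```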

Benchmark

Elite DORA teams: under 1 hour; high performers: 1 day to 1 week

Business Risk

High cycle time creates release pressure that leads to skipping testing steps — the opposite of what automation should enable.

Time to Achieve

Improvement visible within 30–45 days of pipeline optimization

14. Test Case Effectiveness

Not all tests are created equal. Test case effectiveness measures whether your tests are actually finding defects or just confirming that things already known to work still work. It also helps prioritize which tests to maintain and which to retire, a useful filter as suites grow.

KPI Formula

(Number of Defects Found by a Test / Total Executions of That Test) = Test Case Effectiveness, calculated per test and then ranked and analyzed across the suite
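The ranking step might be sketched in Python as below, assuming you can export, for each test, its execution count and the number of defects it found over the same window (for example, 90 days). The test names and counts are hypothetical. Zero-defect tests are treated as candidates for review rather than automatic deletion, since some of them may guard critical flows that simply have not regressed.

```python
def rank_test_effectiveness(stats: dict[str, tuple[int, int]]) -> list[tuple[str, float]]:
    """stats: test name -> (executions, defects found) over the same window.

    Returns (test name, defects per execution), most effective first.
    """
    scores = [(name, defects / runs) for name, (runs, defects) in stats.items() if runs > 0]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Hypothetical 90-day window
window_stats = {
    "test_payment_refund": (180, 4),
    "test_login_ok": (900, 0),
    "test_cart_totals": (450, 3),
    "test_footer_links": (900, 0),
}
ranking = rank_test_effectiveness(window_stats)
review_candidates = [name for name, score in ranking if score == 0]
for name, score in ranking:
    print(f"{name}: {score:.4f} defects per run")
print("Review candidates:", review_candidates)
```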

Benchmark

No universal benchmark; focus on identifying the bottom 20% of tests that find zero defects over 90 days

Business Risk

Low-effectiveness tests waste compute and engineer time while providing no incremental quality signal.

Time to Achieve

Analysis possible within one quarter of tracked test execution data

15. CI/CD Pipeline Test Pass Rate

This is the metric your DevOps and engineering leadership watches most closely. Pipeline pass rate determines whether code can move forward or gets blocked, making it the frontline indicator of build health and automation reliability.

KPI Formula

(Builds Passing All Automated Tests / Total Builds) × 100 — tracked per pipeline stage
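A minimal Python sketch of both views, per stage and across all stages, assuming your pipeline can export one `(build_id, stage, passed)` record per build per stage that ran (a hypothetical format). If your pipeline stops at the first failing stage, later stages have no record for that build, so each stage's rate only counts builds that reached it. The 80% flag comes from this section's benchmark.

```python
from collections import Counter


def pass_rate_by_stage(results: list[tuple[str, str, bool]]) -> dict[str, float]:
    """results: (build_id, stage, passed) records, one per build per stage that ran."""
    totals = Counter(stage for _, stage, _ in results)
    passes = Counter(stage for _, stage, passed in results if passed)
    return {stage: 100 * passes[stage] / totals[stage] for stage in totals}


def overall_pass_rate(results: list[tuple[str, str, bool]]) -> float:
    """Percentage of builds whose every recorded stage passed."""
    build_ok: dict[str, bool] = {}
    for build, _, passed in results:
        build_ok[build] = build_ok.get(build, True) and passed
    if not build_ok:
        raise ValueError("no builds recorded")
    return 100 * sum(build_ok.values()) / len(build_ok)


# Hypothetical pipeline history
runs = [
    ("b1", "unit", True), ("b1", "e2e", True),
    ("b2", "unit", True), ("b2", "e2e", False),
    ("b3", "unit", True), ("b3", "e2e", True),
    ("b4", "unit", True), ("b4", "e2e", True),
]
for stage, rate in pass_rate_by_stage(runs).items():
    flag = "  <- investigate" if rate < 80 else ""
    print(f"{stage}: {rate:.0f}%{flag}")
print(f"All stages: {overall_pass_rate(runs):.0f}%")
```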

Benchmark

85–95% for stable teams; consistently below 80% indicates suite or infrastructure problems

Business Risk

Low pipeline pass rate breaks developer trust in automation and creates a manual override culture that defeats the purpose of CI/CD.

Time to Achieve

Measurable from day one of pipeline integration; stabilization within 30–60 days

How to Gather and Analyze These Metrics

The data behind these metrics lives in a few places: your CI/CD pipeline (Jenkins, GitHub Actions, GitLab CI), your test management platform, and your observability stack.

Most teams already have the raw data. The gap is usually in aggregating it into a coherent picture that engineering, QA, and business leadership can all read.

Where the Data Lives

• CI/CD pipelines: Execution time, pass/fail rates, pipeline health, deployment frequency

• Test management tools: Coverage, defect detection, test case effectiveness, maintenance tracking

• Incident and support systems: Defect leakage, MTTD, production impact

• Cloud cost platforms: Execution cost, infrastructure overhead

Making Sense of It

Real-time dashboards that pull from these sources give QA leads and engineering managers a current-state view. BI tools layered on top support trend analysis, which is where the executive narrative comes from. Showing that defect leakage dropped 40% over two quarters, while test execution time halved, tells a story that a static number cannot.

AI-assisted QA analytics platforms like Kualitee are moving this further, surfacing anomalies, predicting coverage gaps, and generating reports in formats that translate directly into executive briefings.

Common Pitfalls in Tracking Automation Metrics

Tracking Vanity Metrics

Test count and execution count are the most common offenders. A suite running 10,000 tests sounds impressive. If 3,000 of them are duplicates and 2,000 are flaky, the number means nothing. Focus on metrics with a direct line to business outcomes.

Poor Data Quality

Metrics are only as good as the data feeding them. Test results that are not logged consistently, defect records that are not categorized properly, and pipeline runs that are not tagged to specific releases all degrade your ability to draw conclusions.

Metrics Without Context

A 70% pass rate on a brand-new suite in active development reads differently than 70% on a stable suite that was at 95% last quarter. The number alone does not tell you whether to act. Trend data and business context do.

The fix is straightforward in principle: track fewer metrics more rigorously, tie them to explicit business objectives, and automate the reporting so the data stays current rather than becoming a monthly manual exercise.

Platforms That Support Metrics and Analytics in 2025

The tools available for automation metrics have matured significantly. Three capabilities matter most from a business perspective: real-time visibility, CI/CD integration, and reporting that translates QA data into language leadership can act on.

• Kualitee: AI-powered test management with built-in analytics, CI/CD integration, and executive reporting that maps test data to business outcomes. Designed for teams that need both QA depth and stakeholder visibility.

• Jira + Xray: Strong for Agile teams already in the Atlassian ecosystem; coverage and defect tracking integrated with project management.

• TestRail: Structured test management with solid reporting; works well for compliance-heavy environments that need audit trails alongside metrics.

Conclusion

Metrics are not the goal. The goal is reliable software, confident releases, and a quality engineering program that earns its investment. Metrics are how you know whether you are getting there.

The 15 metrics in this article give leadership a framework to ask the right questions — not just “is QA testing everything?” but “where are we exposed, what is it costing, and how fast are we moving compared to where we need to be?”

Teams that track these metrics rigorously tend to find something counterintuitive: better measurement does not make QA more bureaucratic. It makes it faster, because decisions get made with data rather than waiting for the next incident to surface what the metrics already knew.

If your current QA reporting does not answer those questions today, that gap is worth closing before the board asks and you do not have the data to respond.

Ready to Turn Your QA Metrics Into Business Insights?

See how Kualitee brings all 15 of these metrics into one dashboard, with AI-driven reporting built for engineering leadership.

→ Book a Demo | Bookmark to keep this Metrics Checklist
