How to Reduce QA Costs Without Cutting Coverage

- April 3, 2026

- Nabeesha Javed

Testing is seen as a cost, not a profit centre. This has not changed in most companies over the last 30 years. Until it does, if it ever does, there will always be pressure to reduce the cost of QA.

Your QA budget went up 20% last year. Defect leakage didn’t go down. Release velocity didn’t improve. And the board is asking why engineering spend keeps climbing without visible returns.

Sound familiar? You’re not alone. Most enterprise engineering leaders face the same paradox: the more they invest in quality assurance, the less efficient the function becomes. Not because they’re doing it wrong. Because they’re doing it the same way they did five years ago, while everything around it has changed.

The Cost Problem Isn’t What You Think It Is

Here’s the number that should bother you: IBM’s Systems Sciences Institute found that a defect caught in production costs 6x more to resolve than one caught during implementation, and up to 100x more than one caught during requirements. That’s not a testing problem. That’s a timing problem.

Most enterprises aren’t overspending on quality programs. They’re spending in the wrong sequence. Heavy investment in end-to-end regression suites that run late in the cycle. Manual verification on features that should have been validated two sprints ago. Automated scripts cover stable functionality while new, high-risk code ships with minimal coverage.

The World Quality Report 2024-25 by Capgemini reports that organisations with mature test optimisation practices spend 23% less on defect remediation than those using traditional validation models. The difference is allocation.

Why “Just Automate More” Stopped Working

For the last decade, the default answer to rising QA spend has been automation. And it worked, for a while. But the economics of automation shift once your suite hits a certain scale.

Maintaining 10,000 automated scripts costs real money. Triaging flaky results eats engineering hours. Expanding coverage to 2,000+ device and browser configurations multiplies infrastructure overhead. The automation that was supposed to reduce cost becomes a cost centre of its own, and nobody wants to be the person who says “our test suite is slowing us down” in a leadership meeting.

The real shift isn’t from manual to automated. It’s from reactive coverage to intelligent allocation. The question isn’t “how many scripts do we run?” It’s “are we validating the right things at the right moment in the pipeline?”

Three Structural Practices for Reducing QA Costs That Actually Bend the Cost Curve

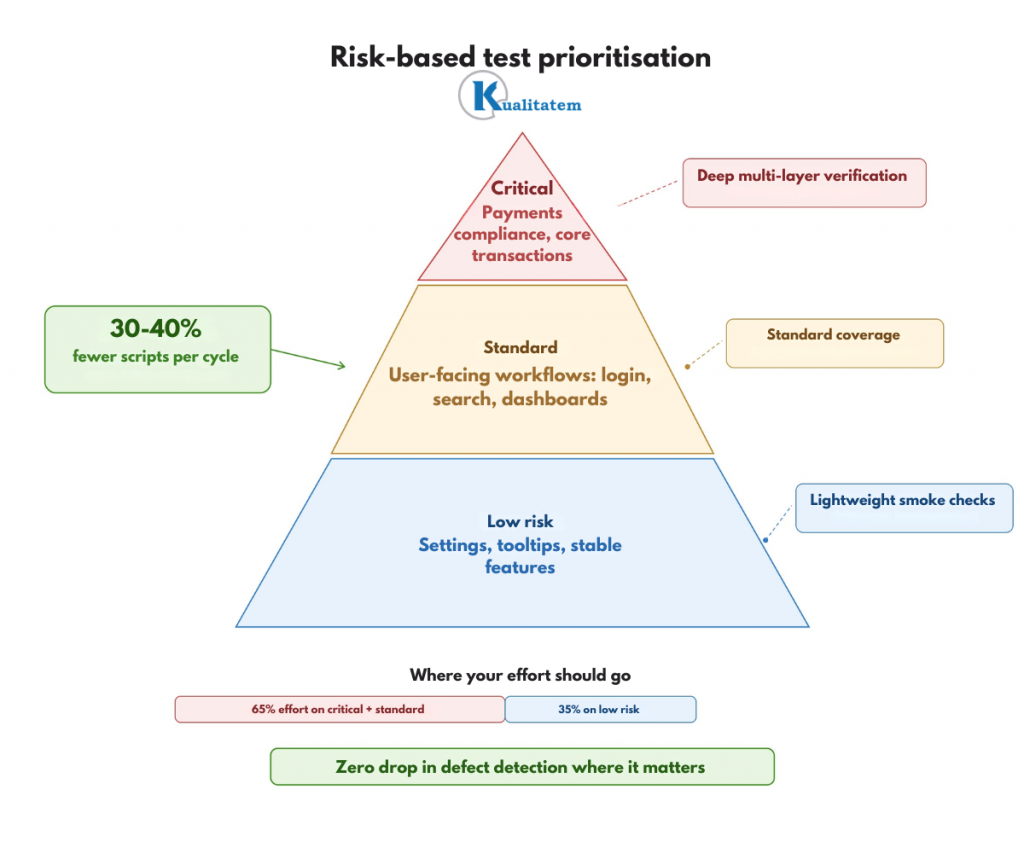

1. Risk-Based Prioritisation Over Flat Coverage

Not every feature carries equal business risk. A payments module in a FinTech app and a settings page tooltip do not deserve the same validation depth. Yet most enterprise suites treat them identically.

Risk-based prioritisation means mapping every test case to its business impact, user frequency, and failure consequence. High-transaction workflows get deep, multi-layer verification. Low-risk, stable functionality gets lightweight smoke checks. The result: 30-40% fewer scripts running per cycle with zero reduction in defect detection where it matters.

This isn’t about cutting corners. It’s about redirecting engineering effort from mechanical repetition to targeted validation. Your compliance-critical paths get more scrutiny, not less. Everything else gets right-sized.
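As a minimal sketch of the mapping described above, the snippet below scores each test case on the three factors and assigns a validation tier. The 1-to-5 scales, the multiplicative score, the thresholds, and every name in it (`ScoredCase`, `risk_score`, `assign_tier`) are illustrative assumptions, not a standard model. A real program would calibrate them against its own defect and usage data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredCase:
    """A test case annotated with hypothetical 1-to-5 risk factors."""
    name: str
    business_impact: int      # 1 (cosmetic) to 5 (revenue or compliance critical)
    user_frequency: int       # 1 (rarely used) to 5 (used in most sessions)
    failure_consequence: int  # 1 (minor annoyance) to 5 (data loss, legal exposure)


def risk_score(case: ScoredCase) -> int:
    """Multiply the factors so that uniformly high-risk paths stand out sharply."""
    return case.business_impact * case.user_frequency * case.failure_consequence


def assign_tier(case: ScoredCase, deep_threshold: int = 60, smoke_threshold: int = 15) -> str:
    """Map a score to a validation depth. Thresholds are illustrative only."""
    score = risk_score(case)
    if score >= deep_threshold:
        return "deep"
    if score >= smoke_threshold:
        return "standard"
    return "smoke"


cases = [
    ScoredCase("payments_checkout", 5, 5, 5),
    ScoredCase("profile_update", 3, 3, 2),
    ScoredCase("settings_tooltip", 1, 2, 1),
]

# Build the per-cycle plan: which depth of validation each case receives.
plan = {case.name: assign_tier(case) for case in cases}
print(plan)
```

With these made-up inputs, the payments path lands in the deep tier and the tooltip in the smoke tier, which is the right-sizing the section argues for.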

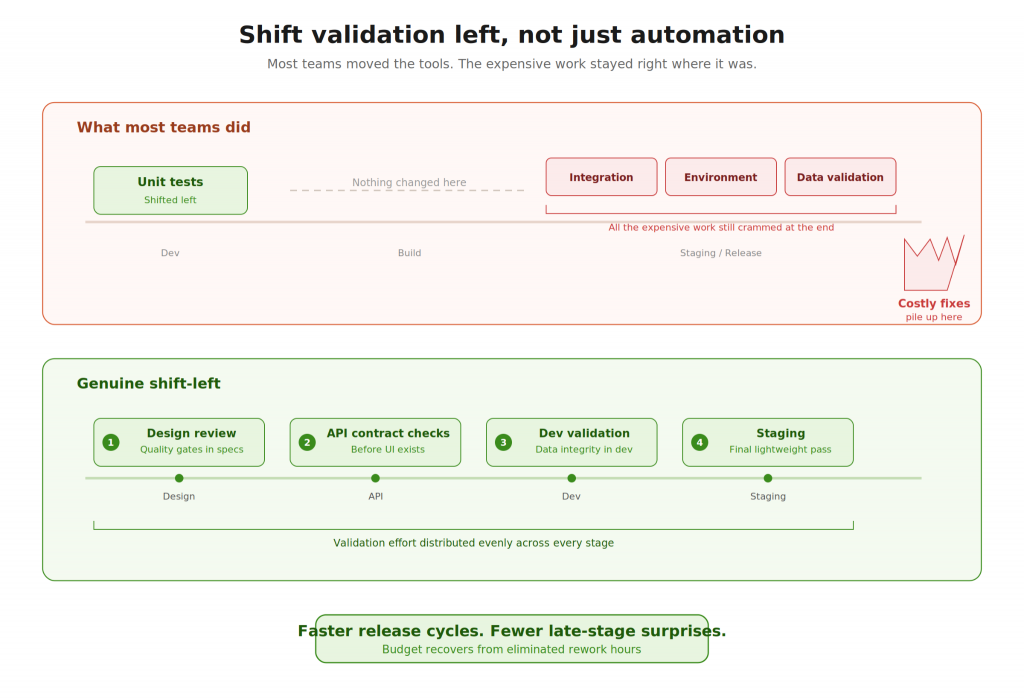

2. Shift Validation Left, Not Just Automation

“Shift-left” has become an industry cliché. But most organisations only shifted the tools left. They gave developers access to unit test frameworks and called it done. The expensive work (integration verification, environment provisioning, and data management) still sits at the end of the pipeline, exactly where it’s most costly to find problems.

Genuine early-stage validation means embedding quality gates into design reviews, running contract checks at the API level before a UI exists, and catching data integrity issues during development rather than in staging. Organisations practising continuous validation at every pipeline stage report up to 50% faster release cycles according to DORA’s Accelerate State of DevOps findings. Faster cycles mean fewer late-stage rework hours. That’s where the budget recovers.
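To make "contract checks at the API level before a UI exists" concrete, here is a small sketch in plain Python. The contract, the field names, and the `contract_violations` helper are hypothetical. Teams typically use a dedicated contract-testing or schema-validation tool for this, and the snippet only illustrates the idea of failing fast on a payload that drifts from the agreed shape.

```python
from typing import Any

# Hypothetical contract for a payment-status response, agreed at design time.
PAYMENT_STATUS_CONTRACT: dict[str, type] = {
    "payment_id": str,
    "amount_cents": int,
    "currency": str,
    "status": str,
}


def contract_violations(payload: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return readable violations; an empty list means the payload conforms."""
    problems = []
    for field, expected_type in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


# Sample payloads standing in for responses from a service stub.
good = {"payment_id": "p-1", "amount_cents": 1999, "currency": "USD", "status": "settled"}
bad = {"payment_id": "p-2", "amount_cents": "19.99", "status": "settled"}

print(contract_violations(good, PAYMENT_STATUS_CONTRACT))
print(contract_violations(bad, PAYMENT_STATUS_CONTRACT))
```

A check like this can run against a stubbed service in the development pipeline, so a type or field mismatch surfaces before any screen depends on it.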

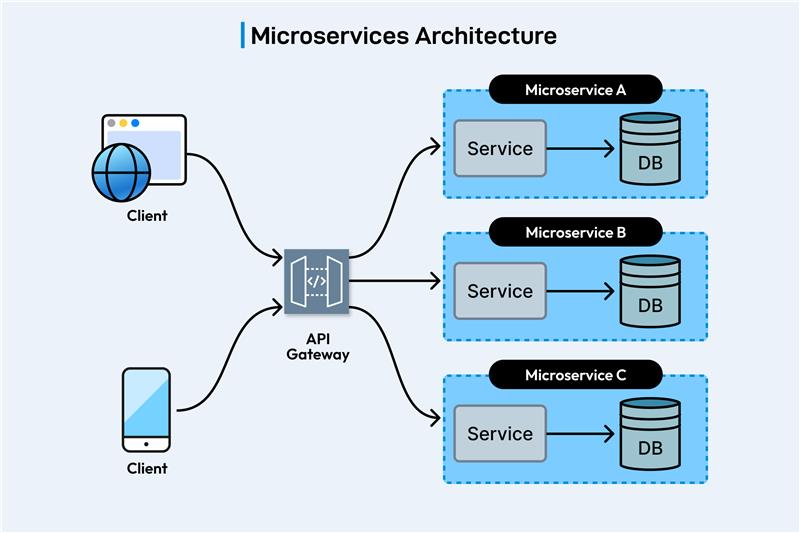

3. Consolidate the Vendor Stack and Specialise

Here’s a pattern we see in almost every enterprise engagement: three different automation platforms, two performance tools, a separate accessibility solution, and a manual team stitching it all together with spreadsheets. Each tool has its own license, its own learning curve, and its own maintenance burden. Combined, they cost more than a unified, purpose-built program ever would.

Consolidation doesn’t mean picking one tool and forcing everything through it. It means choosing a partner with the breadth to cover functional, performance, security, and compliance validation under a single operational model. One reporting structure. One escalation path. One team accountable for outcomes, not activities.

The economics are straightforward: tool license overlap alone typically accounts for 15-20% of wasted QA spend in enterprises running three or more platforms. Eliminating that redundancy funds the specialised capability you actually need.

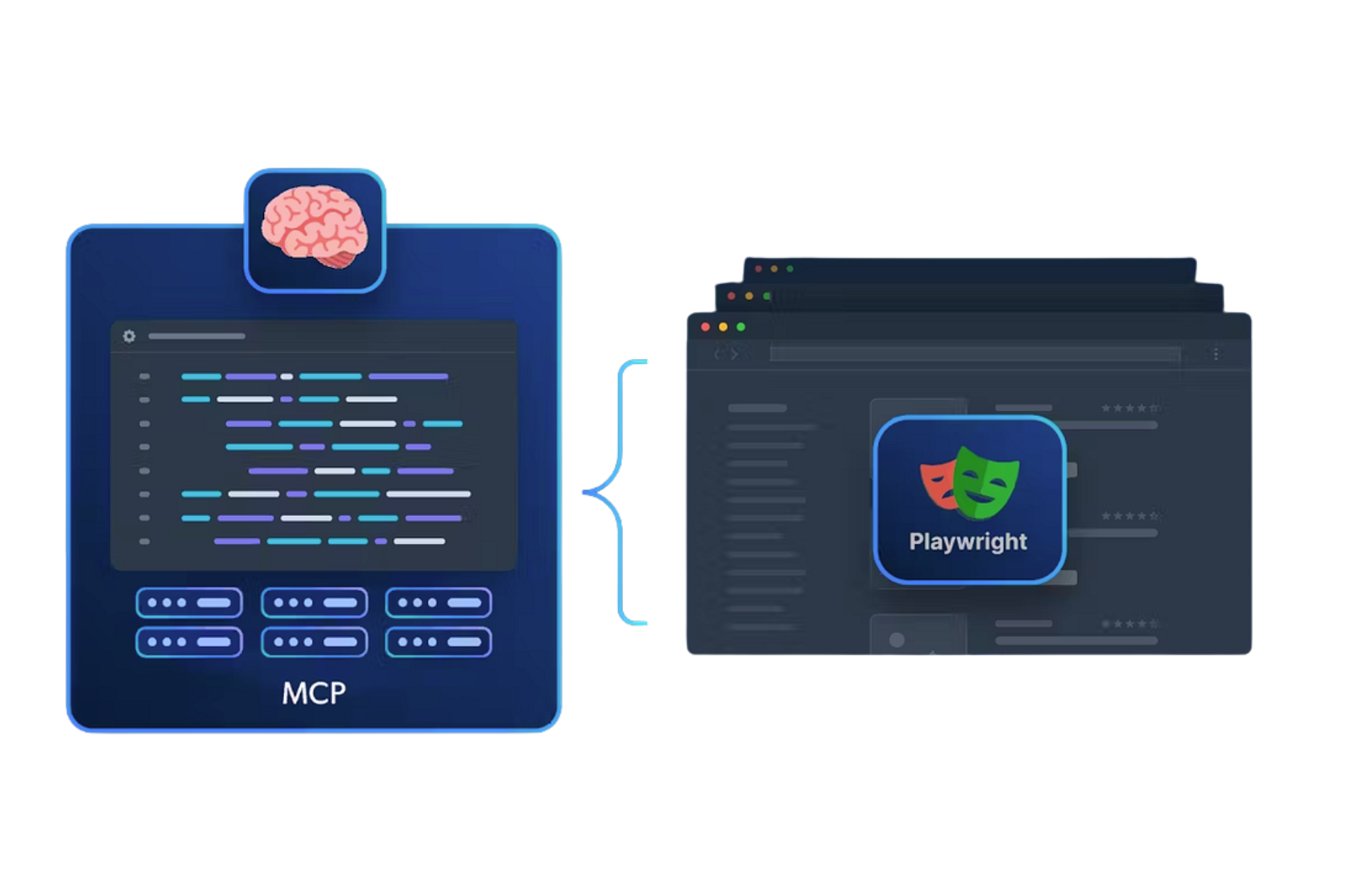

How to Reduce QA Costs with AI in Software Testing

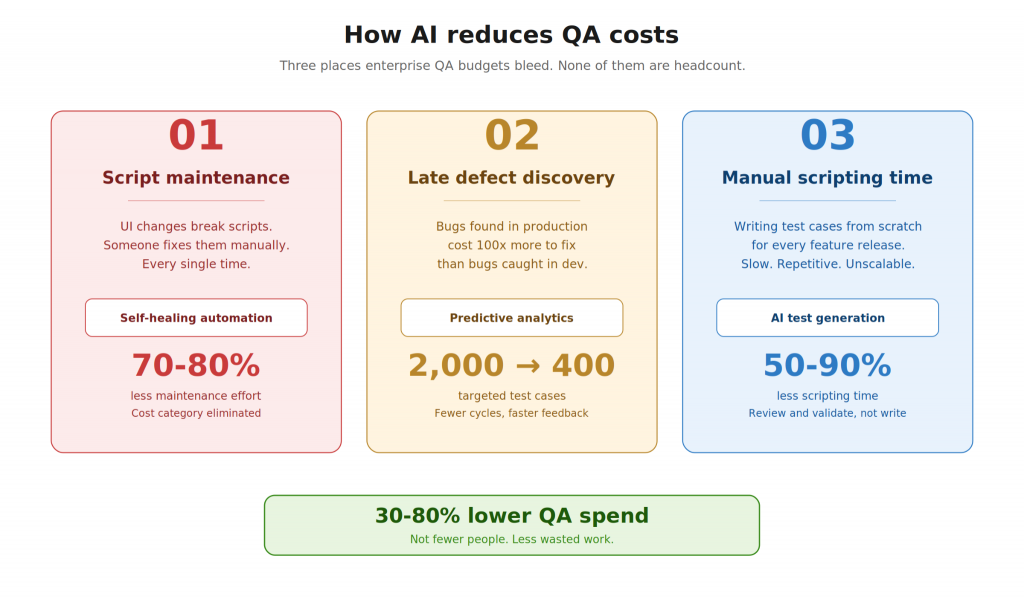

AI doesn’t reduce QA costs by replacing your team. It reduces them by eliminating the work that should never reach your team in the first place.

Most enterprise QA budgets bleed in three places. None of them are headcount.

The first is maintenance

Every UI change, every locator shift, every minor frontend update triggers a cascade of broken scripts that someone has to fix manually. Self-healing automation tools detect these changes and repair scripts without human intervention, cutting maintenance effort by 70-80%. That is not a productivity gain. That is an entire cost category nearly disappearing from your budget.
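The sketch below illustrates one simple form of self-healing: an ordered list of fallback locators tried against a page. It is a deliberately reduced stand-in, assuming a page modelled as a list of attribute dictionaries. Commercial tools rely on richer signals, and nothing here reflects the internals of any specific product. All names (`find`, `find_with_healing`, the sample attributes) are hypothetical.

```python
from typing import Optional

Element = dict[str, str]
Locator = tuple[str, str]  # (attribute name, expected value)


def find(page: list[Element], attribute: str, value: str) -> Optional[Element]:
    """Return the first element whose attribute equals value, or None."""
    for element in page:
        if element.get(attribute) == value:
            return element
    return None


def find_with_healing(
    page: list[Element], locators: list[Locator]
) -> tuple[Optional[Element], Optional[Locator], bool]:
    """Try locators in priority order.

    Returns (element, locator_used, healed). healed is True when the primary
    locator failed and a fallback matched, which is worth logging for review.
    """
    for index, (attribute, value) in enumerate(locators):
        element = find(page, attribute, value)
        if element is not None:
            return element, (attribute, value), index > 0
    return None, None, False


# The submit button's id changed in a frontend update; its test id and text did not.
page = [
    {"id": "btn-submit-v2", "data-testid": "submit-order", "text": "Place order"},
    {"id": "btn-cancel", "data-testid": "cancel-order", "text": "Cancel"},
]
locators = [("id", "btn-submit"), ("data-testid", "submit-order"), ("text", "Place order")]

element, used, healed = find_with_healing(page, locators)
print(used, healed)
```

Returning the `healed` flag rather than silently succeeding keeps a human in the loop: the script keeps running, and the stale primary locator can be updated in a batch later.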

The second is late discovery

A defect caught in production can cost up to 100x what it costs to fix early in the lifecycle. Predictive analytics trained on code change history and past defect patterns can flag high-risk modules before a test cycle even begins. Instead of running 2,000 test cases and hoping critical bugs surface, your team runs 400 targeted cases against the areas most likely to fail. Fewer cycles, faster feedback, lower remediation cost.
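A rough sketch of the selection step follows. Note the substitution: instead of a trained predictive model, it uses a hand-weighted heuristic over recent churn and past defects, purely to show how a risk ranking turns into a smaller targeted run. The weights, module names, figures, and function names are all made up for illustration.

```python
def module_risk(changed_lines: int, past_defects: int,
                churn_weight: float = 1.0, defect_weight: float = 50.0) -> float:
    """Heuristic risk: recent code churn plus weighted historical defects."""
    return churn_weight * changed_lines + defect_weight * past_defects


def select_tests(modules: dict[str, tuple[int, int]],
                 tests_by_module: dict[str, list[str]],
                 top_n: int) -> list[str]:
    """Return the tests covering the top_n riskiest modules, riskiest first."""
    ranked = sorted(modules, key=lambda name: module_risk(*modules[name]), reverse=True)
    selected: list[str] = []
    for name in ranked[:top_n]:
        selected.extend(tests_by_module.get(name, []))
    return selected


# Hypothetical inputs: (lines changed this release, defects found in the past year).
modules = {
    "payments": (420, 7),
    "search": (900, 1),
    "settings": (15, 0),
    "onboarding": (120, 3),
}
tests_by_module = {
    "payments": ["test_checkout", "test_refund"],
    "search": ["test_query_ranking"],
    "settings": ["test_tooltip_copy"],
    "onboarding": ["test_signup_flow"],
}

targeted = select_tests(modules, tests_by_module, top_n=2)
print(targeted)
```

In practice the ranking would come from a model trained on your own change and defect history, and the unselected tests would still run on a slower cadence rather than being dropped.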

The third is scripting time

Writing test cases from scratch for every feature release is slow and repetitive, and it does not scale with your roadmap. AI-powered generation tools now create cases directly from requirements documents, design files, or UI scans, reducing scripting time by 50-90%. Your team reviews and validates instead of writing from zero. Coverage goes up. Time-to-release goes down.

Enterprises applying all three (self-healing, predictive prioritisation, and auto-generation) report 30-80% reductions in overall QA spend. Not because they cut people. Because they stopped paying skilled engineers to do work that machines handle faster and more reliably.

Best Tools for Reducing QA Costs

The table below lists tool categories worth evaluating, with example products and their entry-level pricing.

| Tool Category | Example Tools | Starting Price | Key Savings Mechanism |
| --- | --- | --- | --- |
| Test Management | Kualitee | $12/user/mo | Streamlined workflows |
| AI Self-Healing | Mabl, testRigor | $900/mo (free tiers) | 80% less maintenance |
| Cloud Platforms | BrowserStack, LambdaTest | $189/mo or pay-per-use | On-demand scaling |
| Open-Source | Selenium, Cypress, Appium | Free | No licenses; community support |

What This Looks Like in Practice

Kualitatem has spent 16 years building these programs for Fortune 500 enterprises across banking, government, SaaS, and healthcare. With 250+ ISTQB-certified specialists and TMMi Level 5 process maturity, we’ve restructured quality functions that were burning budget without delivering proportional results. The average outcome: 300% ROI on the same or a reduced QA budget. Not by cutting coverage. By reorganising where, when, and how it’s deployed.

Make the Business Case, Not the Apology

If you’re heading into a budget conversation where someone’s going to ask why quality spend keeps climbing, you need a restructuring plan, not a cost-cutting plan. The former protects your releases. The latter just creates a different kind of risk.

We’ve helped engineering leaders reframe that conversation with hard numbers. Let us show you what the restructured model looks like for your stack.

Talk to Kualitatem →