How Will the Rise of DevOps Impact Software Testing?

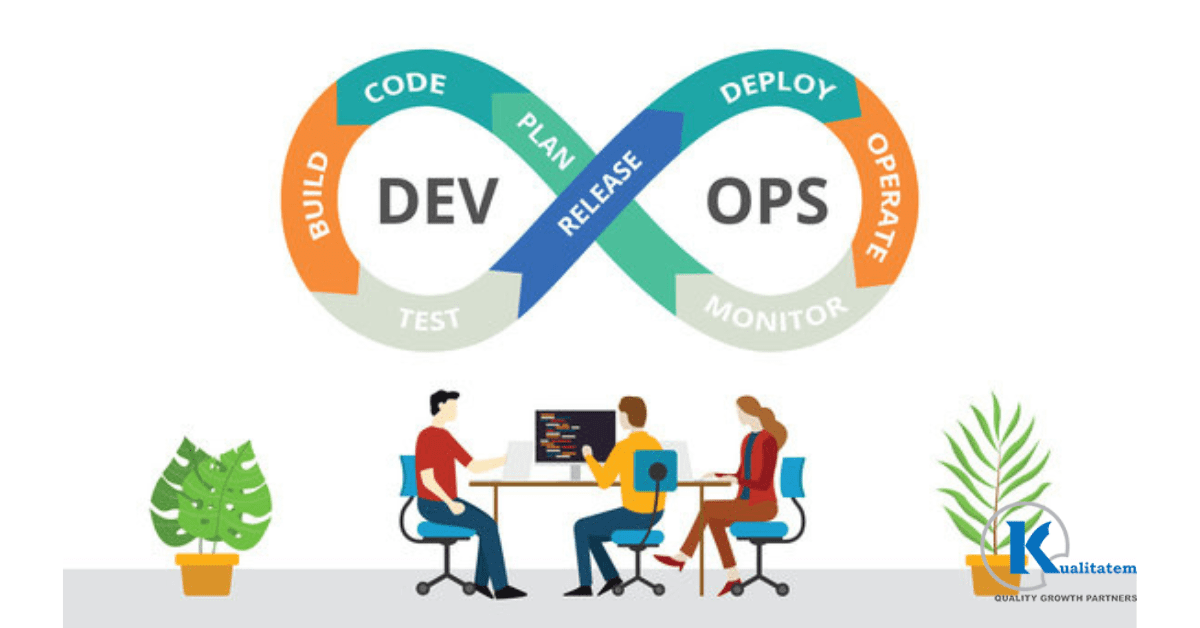

The emergence of DevOps has changed software development and operations by bringing together teams from both disciplines to collaborate and streamline the delivery of products. It has become an increasingly popular approach for improving the quality and speed of development. It combines the principles of agile development, continuous integration, and continuous delivery to automate the delivery process and reduce the time to market.

In this article, we will explore how the rise of DevOps is impacting testing and how organizations can adapt to stay ahead of the curve. We will look at what DevOps is, how it changes the role of traditional testers, and which testing approaches it relies on.

What Is DevOps?

DevOps places a strong emphasis on teamwork, communication, and automation between the development and operations teams. It aims to create a culture of continuous delivery and improvement by breaking down silos and encouraging the sharing of knowledge and responsibilities.

DevOps combines the principles of agile development, continuous integration, and continuous delivery to streamline the development process from coding to deployment. It involves using tools and technologies for automation, deployment, and monitoring of applications, which helps to reduce errors, increase efficiency, and shorten the time to market.

The DevOps approach promotes iterative collaboration between development and operations teams throughout the whole software development lifecycle (SDLC), from planning through testing to deployment. This helps ensure that the finished product is of high quality and meets the needs of its users.

How Does DevOps Change the Role of Traditional Testers?

The rise of DevOps has changed the way development and testing are approached, and as a result, it has affected the role of traditional testers in the following ways:

More Collaboration and Cross-Functional Involvement

With DevOps, coordination between development and operations teams is given increased importance. This means that testers are no longer just responsible for testing, but also for working with developers to ensure that code is properly tested before it is deployed.

Increased Focus on Automation

Automation is a key component of DevOps, which uses it to improve the delivery process. This means that testers will need to have a greater understanding of test automation tools and be able to implement them effectively.

More Involvement in the Software Development Lifecycle

With DevOps, testing is no longer a separate phase that occurs after development is complete. Instead, it is integrated throughout the entire SDLC, and testers need to be involved in the planning, design, development, and deployment stages.

More Emphasis on Continuous Testing

DevOps involves continuous integration and delivery, which means that software is released in smaller, more frequent increments. To make sure that new features and upgrades are thoroughly tested before they are deployed, testers must test continuously.

Greater Focus on Soft Skills

With DevOps, there is more emphasis on collaboration, communication, and teamwork. This means that testers will need to have strong communication and interpersonal skills to effectively work with cross-functional teams.

Approaches Used in DevOps for Software Testing

Testing in DevOps draws on several approaches and techniques to streamline the process and improve the overall quality of products. Here are some of the approaches used:

Continuous Testing

Continuous testing is carried out throughout the entire SDLC, from planning to deployment. This strategy relies on automation to speed up the process and make testing more effective.
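To make the idea more concrete, here is a minimal sketch of a delivery pipeline that runs a check at every stage and stops at the first failure. The stage names and the `run_pipeline` helper are hypothetical, and the checks are placeholder functions; a real pipeline would normally be defined in a CI/CD tool rather than hand-written like this.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a check that returns True when the stage passes.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[Tuple[str, str]]:
    """Run stages in order; once one fails, mark the remaining ones as skipped."""
    report: List[Tuple[str, str]] = []
    failed = False
    for name, check in stages:
        if failed:
            report.append((name, "skipped"))
            continue
        passed = check()
        report.append((name, "passed" if passed else "failed"))
        failed = not passed
    return report


# Hypothetical stages with placeholder checks, for illustration only.
EXAMPLE_STAGES: List[Stage] = [
    ("unit tests", lambda: True),
    ("integration tests", lambda: False),  # simulate a failing stage
    ("deploy to staging", lambda: True),
]

if __name__ == "__main__":
    for stage_name, status in run_pipeline(EXAMPLE_STAGES):
        print(f"{stage_name}: {status}")
```

Because every change passes through the same sequence of checks, a failure surfaces at the earliest stage that can detect it, and nothing is deployed on top of a broken build.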

Test Automation

DevOps depends heavily on test automation. It involves using tools and scripts to automate testing processes such as regression, functional, and performance testing. With test automation, testers can execute tests more quickly and consistently, which also helps to find problems early in the SDLC.
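As a small illustration, the sketch below shows what an automated regression suite might look like using Python's built-in `unittest` module. The `apply_discount` function and its tests are hypothetical stand-ins for real application code, not something taken from a specific project.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountRegressionTests(unittest.TestCase):
    """Checks that can be re-run automatically on every code change."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


def run_suite() -> unittest.TestResult:
    """Load and run the suite programmatically, as a pipeline step might."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(
        ApplyDiscountRegressionTests
    )
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    result = run_suite()
    print("passed" if result.wasSuccessful() else "failed")
```

Once checks like these exist as code, they can run on every commit without manual effort, which is what makes frequent releases practical.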

Shift-Left Testing

Shift-left testing involves testing applications early in the development cycle. This approach allows testers to identify and fix issues before they become more complex and costly to resolve.

Exploratory Testing

Exploratory testing involves using manual techniques to explore an application and uncover issues. This approach is particularly useful in DevOps because it allows testers to find problems that automated tests may miss.

Risk-Based Testing

Risk-based testing involves identifying the highest-risk areas of an application and focusing testing effort on those areas. This approach helps to ensure that the most critical areas of the application are thoroughly tested, while also reducing the time and effort spent on areas that are less important.
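One simple way to put this into practice is to score each area and sort by the result. The sketch below assumes a common convention of risk = likelihood of failure × business impact on 1 to 5 scales; the area names, scores, and threshold are made up for illustration, and real teams may weigh risk quite differently.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Area:
    name: str
    failure_likelihood: int  # 1 (rare) to 5 (frequent); illustrative scale
    business_impact: int     # 1 (minor) to 5 (severe); illustrative scale

    @property
    def risk_score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.failure_likelihood * self.business_impact


def prioritize(areas: List[Area]) -> List[Area]:
    """Return areas ordered from highest to lowest risk score."""
    return sorted(areas, key=lambda area: area.risk_score, reverse=True)


def select_for_deep_testing(areas: List[Area], threshold: int = 12) -> List[Area]:
    """Keep only areas whose score meets the (hypothetical) threshold."""
    return [area for area in prioritize(areas) if area.risk_score >= threshold]


# Hypothetical application areas with made-up scores.
AREAS = [
    Area("payment processing", failure_likelihood=3, business_impact=5),
    Area("user login", failure_likelihood=2, business_impact=5),
    Area("profile page styling", failure_likelihood=4, business_impact=1),
    Area("order history export", failure_likelihood=4, business_impact=3),
]

if __name__ == "__main__":
    for area in prioritize(AREAS):
        print(f"{area.risk_score:>2}  {area.name}")
```

The ranking gives the team an explicit, reviewable basis for deciding where thorough testing is worth the time and where lighter checks are acceptable.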

Conclusion

In conclusion, the rise of DevOps in software development has had a significant impact on the role of software testing. It has brought about a shift in the traditional approach, with more emphasis on automation, continuous testing, and collaboration between developers and testers. Together, these changes have helped to improve the efficiency and effectiveness of testing.

By implementing DevOps, organizations can deliver software products that are of high quality, meet user needs, and are released quickly and efficiently. As the demand for faster and more efficient software delivery continues to increase, adopting DevOps practices will become even more critical.