What a Test Automation Maturity Model Actually Looks Like

- March 26, 2026

- Nabeesha Javed

Like every CTO, you want to ship the highest-quality product you can.

“The sooner the better.”

Your test automation is supposed to help you ship faster and build better. Before you commit the budget, you need to know that most organizations get automation testing only partly right. They write the tests, set up the pipeline, and watch the green builds roll in. For a while, it works.

Then one script fails, and the test suite (nobody’s primary responsibility) starts falling behind. Engineers trade blame, and you are stuck in a spiral of resolving issues.

Test automation has existed for years now…

Most companies know what to do, but most still can’t execute at scale.

The problem is the gap between planning and real implementation.

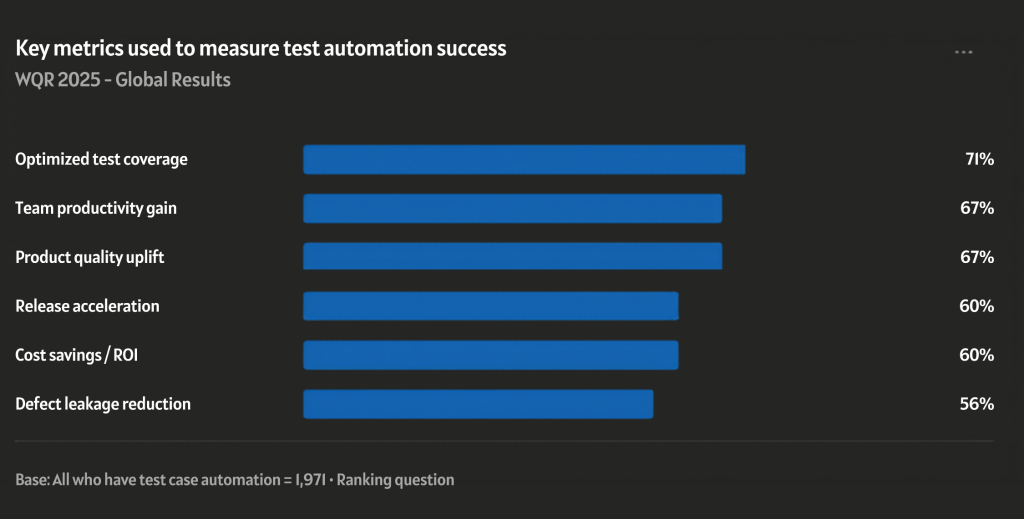

According to the World Quality Report, 42% of organizations report that their test automation did not deliver the expected ROI. The same report shows that QA teams with mature automation delivered 40% faster and reduced post-release defects by up to 35%.

That’s exactly where the automation maturity model comes in.

What is the Test Maturity Model (TMM)?

Test automation maturity is a structured way to measure how systematically, consistently, and reliably an organization uses automated testing across its software delivery lifecycle. A Test Maturity Model (TMM) answers the question “how smart, fast, and trustworthy is that automation?”, which is critical for C‑level decisions on speed, risk, and cost.

Why is it Critical Right Now?

It maps how far your organization has moved from scattered, fragile scripts toward a predictable, integrated, and self‑improving automation system that can keep pace with CI/CD and product‑team velocity.

Why it matters for engineering leadership:

- Data from Shftrs shows that organizations with mature test automation typically see 40–50% faster release cycles because quality is enforced earlier and more consistently.

- Silent compounding: every small improvement in test design, coverage, and integration pays off over time through shorter feedback loops, fewer regressions, and safer refactors.

- The maturity gap: surveys indicate most teams sit between Level 2 (Managed) and Level 4 (Quantified), meaning they automate but still struggle with flakiness, maintenance, and trust.

A trusted automation maturity model gives the CTO:

- A scorecard to benchmark the current state against industry practice.

- A roadmap to increase build speed, reduce regression risk, and ensure quality gates are reliable and predictable.

- A governance framework to fund the right investments (tools, skills, CI/CD integration) and measure ROI in terms of time‑to‑market and defect escape.

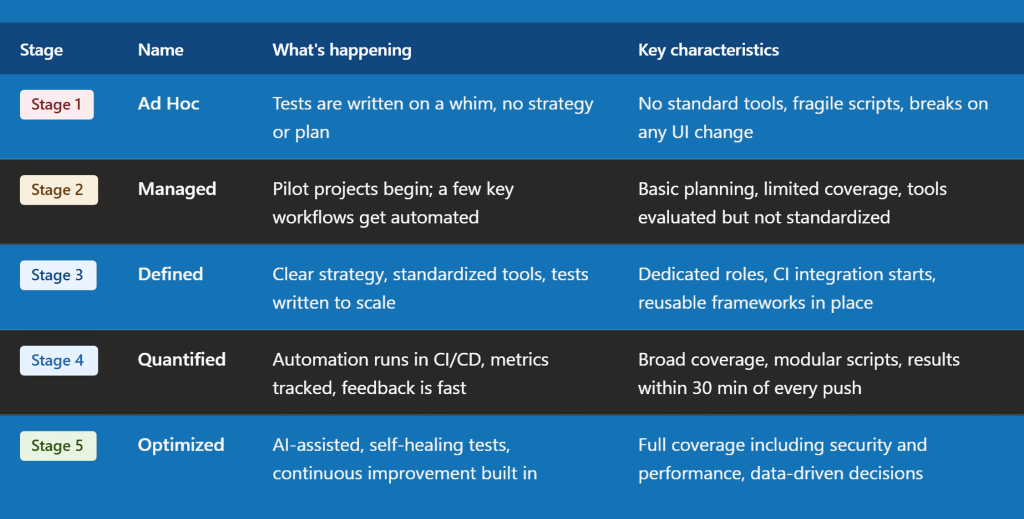

Levels of the Maturity Model

The model shows where your automation stands today and what to fix next.

At the start, teams mostly do manual testing with a bit of automation. As you improve, you bring in standard tools and processes. This makes testing more consistent and easier to manage. At higher levels, automation becomes part of your delivery pipeline.

Testing runs continuously, and you can release faster with more confidence. At this stage, the cost of quality becomes clear: fixing issues early is cheaper, faster, and less risky.

5‑pillar framework L1–L5 view

A strong 5‑pillar framework for test automation maturity typically covers:

- Strategy & governance

- Processes & practices

- Tools & frameworks

- People & skills

- Metrics & continuous improvement

1. Implement Cross-Functional Automation

Most teams treat test automation as a QA responsibility. That’s the first mistake. When engineering, product, and leadership all have a stake in what gets automated and why, the program starts driving real release confidence.

- At L1–L2, automation decisions happen reactively.

- By L3 and beyond, there’s a shared council (CTO, engineering, QA, product) reviewing automation scope like a product backlog.

- Most helpful from L2 to L3. Start by putting automation priorities on the same planning board as feature work.

Companies that do this report up to 40% fewer critical-path regressions at release.

2. Test Design Reviews

At earlier stages, engineers tend to write tests that simply pass but are not structured to last. These scripts are tightly coupled to the UI, so they are brittle and get rewritten every time something changes.

The answer is not more test cases; it is better-designed ones. Adding test design reviews to sprint planning, with reusable patterns, clear entry and exit criteria, and modular structures, turns maintenance into a routine part of the work instead of a rescue effort. This is most helpful from L2 to L3.

For example, one of our e-commerce clients at Kualitatem cut their build times by 40% simply by refactoring fragile UI scripts into a shared, parameterized framework. If you want to refactor your own UI scripts, connect with our team to learn more about this transformation.
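To make the idea of a shared, parameterized framework concrete, here is a minimal sketch in Python. It is not the client's actual framework: `FakeDriver`, the `SELECTORS` map, `LoginCase`, and the `data-test` selector values are all hypothetical names chosen for illustration. The point is only that selectors live in one place and one flow is reused across many data cases.

```python
from dataclasses import dataclass


class FakeDriver:
    """Stand-in for a real browser driver (hypothetical, for illustration)."""

    def __init__(self):
        self.actions = []

    def fill(self, selector, value):
        self.actions.append(("fill", selector, value))

    def click(self, selector):
        self.actions.append(("click", selector))


# Assumed selectors, kept in one shared place so a UI change is fixed once.
SELECTORS = {
    "email": "[data-test=email]",
    "password": "[data-test=password]",
    "submit": "[data-test=submit]",
}


@dataclass(frozen=True)
class LoginCase:
    name: str
    email: str
    password: str


def login(driver, case):
    """Reusable login flow, parameterized by test data."""
    driver.fill(SELECTORS["email"], case.email)
    driver.fill(SELECTORS["password"], case.password)
    driver.click(SELECTORS["submit"])


CASES = [
    LoginCase("valid user", "a@example.com", "secret"),
    LoginCase("empty password", "a@example.com", ""),
]


def run_all(cases):
    """Run the same flow for every case and return the recorded actions."""
    results = {}
    for case in cases:
        driver = FakeDriver()
        login(driver, case)
        results[case.name] = driver.actions
    return results
```

With this shape, adding a scenario means adding one `LoginCase` rather than copying a script, and a renamed field in the UI is a one-line change in `SELECTORS`.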

3. Only Have One Primary Framework

Multiple teams using multiple tools is not flexibility; it becomes fragmentation. When every squad has its own distinct scripts, its own selectors, and its own way of running tests, nothing scales, and nothing is trusted.

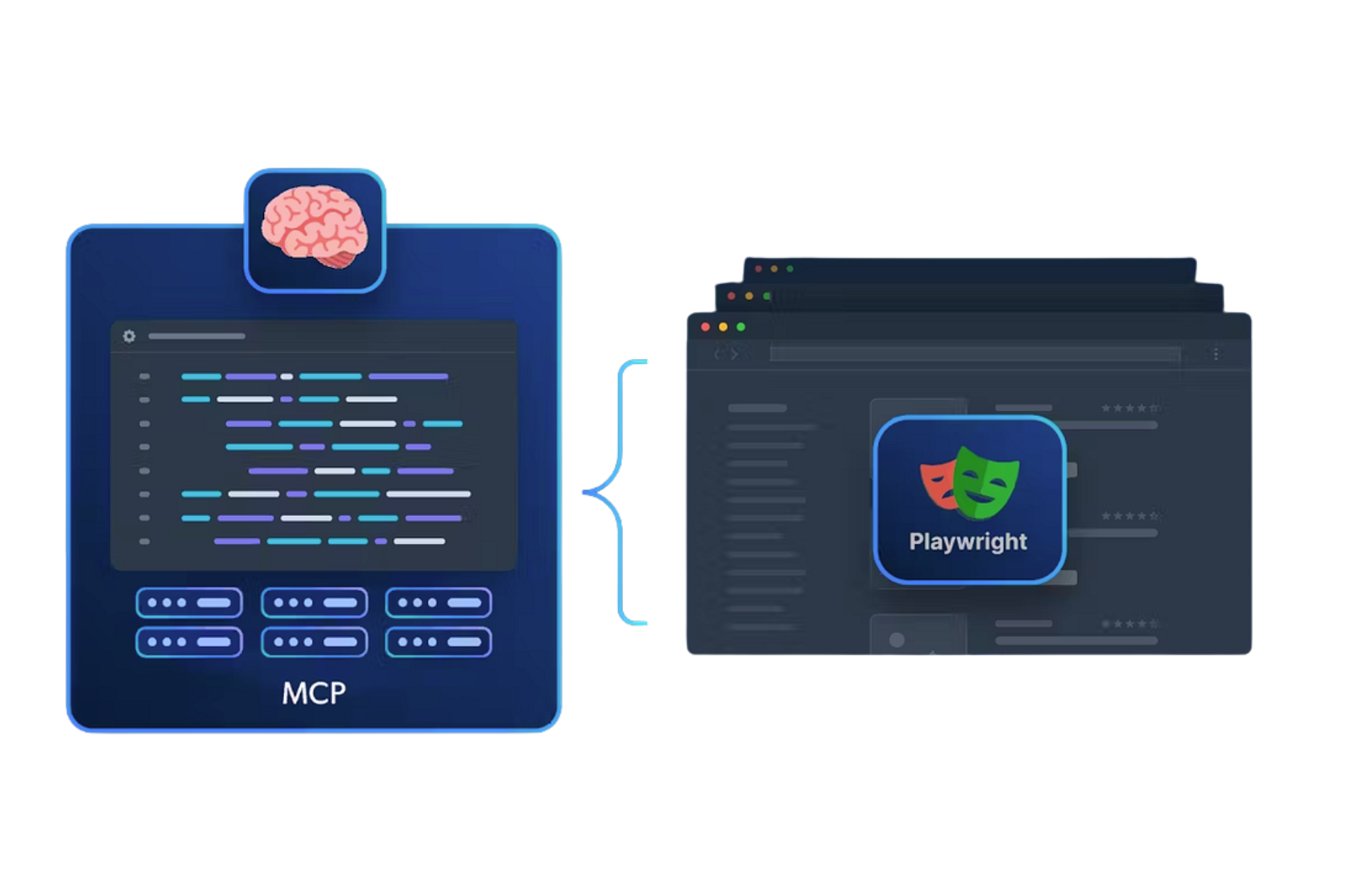

Standardizing on one framework is the fix. A single choice, such as Playwright, Cypress, or Selenium, backed by shared libraries and internal documentation, gives every team a common language. Most helpful at L2 to L3.

4. Add Dev into Your Testing & QA into Dev

When QA is a separate function with no coding ownership, and developers have no stake in test quality, the suite drifts. The healthiest automation programs treat testing as a shared engineering discipline. This means creating clear career paths for automation engineers alongside developers, embedding QA in product squads, and co-owning CI/CD pipelines. This is mostly helpful at the L3 to L4 level.

At Kualitatem, our team operates as a lean team working outside of silos. We reduced flaky tests by 70% after pairing QA engineers with backend developers in dedicated test-maintenance sprints.

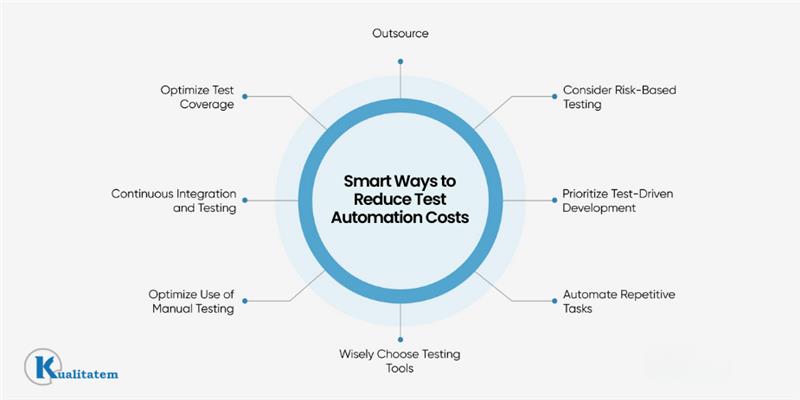

5. Invest in Quality Dashboards

You cannot improve what you cannot see. Most teams at early maturity levels either track nothing or track the wrong thing, like total test count, which tells you almost nothing about suite health.

A proper quality dashboard shows flakiness rate, mean time to fix a failing test, defect escape trends, and ROI per test suite. This gives leadership something actionable, not decorative. Most helpful at L3 to L4. These are the common KPIs most CTOs track to check the health of their test automation.
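As a rough illustration of how two of these KPIs could be computed from raw CI data, here is a small Python sketch. The definitions are assumptions, not an industry standard: a test counts as flaky if it both passed and failed on the same commit, and mean time to fix is the average number of hours between a failure and its fix. `RunRecord` and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass(frozen=True)
class RunRecord:
    test_name: str
    commit: str
    passed: bool


def flakiness_rate(runs):
    """Share of tests that both passed and failed on the same commit.

    Assumed definition; teams may define flakiness differently.
    """
    outcomes = {}
    for run in runs:
        outcomes.setdefault((run.test_name, run.commit), set()).add(run.passed)
    all_tests = {name for name, _ in outcomes}
    flaky = {name for (name, _), seen in outcomes.items() if len(seen) == 2}
    return len(flaky) / len(all_tests) if all_tests else 0.0


def mean_time_to_fix(failures):
    """Average hours between a test failing and being fixed.

    `failures` is a list of (failed_at, fixed_at) datetime pairs.
    """
    if not failures:
        return 0.0
    return mean((fixed - failed).total_seconds() / 3600 for failed, fixed in failures)


# Hypothetical sample data for illustration only.
example_runs = [
    RunRecord("checkout", "abc", True),
    RunRecord("checkout", "abc", False),  # same commit, mixed result: flaky
    RunRecord("login", "abc", True),
    RunRecord("search", "abc", False),
    RunRecord("search", "def", True),     # different commits: not flaky
]
start = datetime(2026, 1, 1, 9, 0)
example_failures = [
    (start, start + timedelta(hours=2)),
    (start, start + timedelta(hours=4)),
]
```

On the sample data, one of three tests is flaky and the mean time to fix is three hours. A real dashboard would read these records from the CI system instead of a hard-coded list.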

If you are at the initial stage, start with this three-phase plan, which our team at Kualitatem uses to build a mature test automation program.

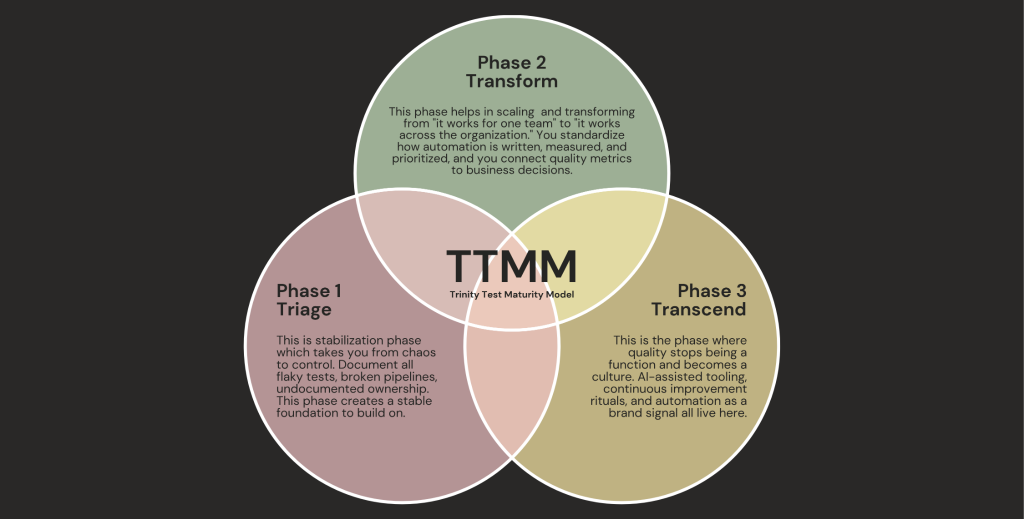

3-phase Trinity Test Maturity Model (TTMM)

The Trinity Test Maturity Model uses three phases (Triage, Transform, and Transcend) to guide organizations from unstable automation to enterprise-wide, AI-driven quality practices. Together, they create a clear, structured path toward a mature test automation program.

Phase 1 – Triage

- Document flaky tests.

- Fix broken pipelines.

- Assign ownership for every test.
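The Triage steps above can be supported by a very small audit script. The sketch below is one possible shape, assuming you can export a list of test names, a name-to-owner mapping, and a list of known flaky tests; the function names and inputs are hypothetical.

```python
def find_unowned_tests(test_names, owners):
    """Return tests with no owner recorded, sorted for stable reporting."""
    return sorted(name for name in test_names if not owners.get(name))


def triage_report(test_names, owners, flaky_tests):
    """Summarize Phase 1 work: what is flaky and what lacks an owner."""
    return {
        # Ignore flaky entries that no longer exist in the suite.
        "flaky": sorted(set(flaky_tests) & set(test_names)),
        "unowned": find_unowned_tests(test_names, owners),
    }
```

For example, `triage_report(["a", "b", "c"], {"a": "team-x", "b": ""}, ["c", "z"])` lists `c` as flaky and `b` and `c` as unowned, which gives the team a concrete worklist for the phase.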

Phase 2 – Transform

- Standardize how tests are written, measured, and prioritized.

- Connect quality metrics to business decisions.

- Make automation work organization-wide, not just for one team.

Phase 3 – Transcend

- Make quality part of your company culture.

- Use AI-assisted tools.

- Practice continuous improvement.

- Treat automation as a brand signal.

- Treat quality as how your organization operates, not just as a task.

To implement the TTMM, you can work with Kualitatem’s expert teams to build a more mature test automation program, which can reduce test flakiness by up to 40%.

Benefits of TTMM (Trinity Test Maturity Model)

- High‑maturity teams report 40–50% faster release cycles due to automated regression and CI‑driven gates.

- Build and test execution times can be reduced by 30–60% after standardizing frameworks and removing flaky tests.

- Organizations with a defined maturity model see 20–30% lower QA‑related re‑work costs over 12–18 months.

- Defect‑escape rates often drop by 30–60% as automated coverage and quality gates become more reliable.

FAQ

1. How do I know my organization is at L2 vs L3?

If tests are scattered, ownership is unclear, and you only trust a few smoke tests, you’re likely at L2. At L3, you have a shared framework, clear entry/exit criteria, and tests are integrated into CI/CD gates so teams actually rely on them.

2. Can’t we just buy a tool and call it ‘mature automation’?

No. Buying a tool only moves you from L1 to L2 at best. Maturity happens when you add governance, standard processes, and shared ownership; otherwise, you just trade manual flakiness for automated flakiness.

3. How much time should we spend on automation vs features?

A healthy balance is to treat automation as part of your core feature work: roughly 15–25% of planning effort in the early stages, then rebalance as you reach L3+ and automation starts cutting re‑work and regression time.